How Reliable Is a Fingerprint? The Science, the Technology, the Limits, and What No One Explains Clearly Enough in a Courtroom

The question I have been asked more consistently across three and a half decades of forensic work than almost any other is also, superficially, the simplest to answer: how reliable is a fingerprint? The simplicity is deceptive. Behind it sits a tangle of embryology, statistics, image processing, cognitive science, and courtroom epistemology that most introductions to the topic flatten into a confidence the evidence does not fully support. This article, which draws on the published paper I co-authored with Dr. Maria-Louise Morgott through the International Institute of Forensic Expertise, attempts to give that question the treatment it actually deserves, moving from the biological foundations of fingerprint uniqueness through the technical processes by which latent prints are recovered and compared, into the statistical framework that governs how certainty should and should not be expressed, and arriving, finally, at the questions of cognitive bias and judicial communication that determine whether the discipline serves justice or merely appears to.

To be clear at the outset: fingerprint evidence is real, its biological foundations are sound, and when handled correctly it remains one of the most powerful identification tools available to forensic investigators. The argument here is not that fingerprints are unreliable. The argument is that the gap between what fingerprint evidence can legitimately claim and what it is routinely claimed to be in courts of law is wide enough to have cost innocent people their freedom, and that gap is not closing fast enough.

Biology First: Why Fingerprints Are Unique at All

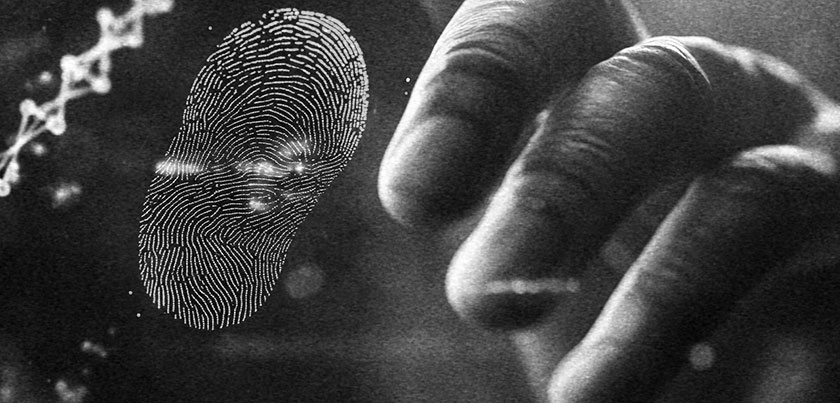

The distinctiveness of fingerprint patterns is not a forensic convention or a statistical approximation. It is a biological fact grounded in the developmental mechanics of the human fetus, and understanding it at that level is essential to understanding both why fingerprint evidence is powerful and what its actual limits are.

Friction ridge skin, the raised dermal structure that produces fingerprint patterns, begins forming between the tenth and sixteenth week of gestation, with the full configuration established by approximately the twenty-fourth week. The process is governed partly by genetics: the overall class of pattern, whether a given finger will carry arches, loops, or whorls, is strongly heritable and can be predicted with some probability from parental fingerprints. The specific minutiae, however, the individual ridge endings, bifurcations, enclosures, and short ridges that constitute the forensically meaningful detail, are shaped by a cascade of developmental stochasticity that makes their precise configuration unpredictable even from genetically identical source material. This is why identical twins, sharing a genome, do not share fingerprints. The same genome, expressed through development under conditions of differential amniotic pressure, differential blood flow, differential fetal position, and dozens of other microenvironmental variables, produces ridge configurations that diverge at the level of specific minutiae even while preserving the overall pattern class (MedlinePlus Genetics, National Library of Medicine, 2023, Are fingerprints determined by genetics?).

The developmental biology also explains the permanence of fingerprint patterns across a lifetime under ordinary conditions. The ridge structures sit in the dermis, beneath the epidermis, anchored by the papillary layer of the skin. Superficial damage to the epidermis, a burn, a cut, an abrasion, temporarily disrupts the surface expression of the ridges but does not alter the underlying papillary layer from which the pattern regenerates. Only injuries deep enough to destroy the papillary layer itself produce permanent alteration, and such injuries are typically severe and medically conspicuous. This permanence, combined with developmental uniqueness, is the biological foundation on which a century of fingerprint evidence in courts of law rests. The biology has not materially changed, and it is solid. What has changed, and what occupies the more interesting forensic territory, is our understanding of what happens between the fingertip and the courtroom conclusion.

The Print Left Behind: Recovery, Enhancement, and the Decisions No One Talks About

Not all fingerprints are equal as evidential material, and the type encountered most often in criminal investigations is precisely the type most subject to degradation, distortion, and interpretive challenge. A friction ridge impression left by eccrine sweat secretions on a smooth surface is invisible to the naked eye, existing as a residue of water, amino acids, chlorides, fatty acids, and proteins deposited in a ridge pattern that can survive for minutes or for decades depending on the surface, the environment, and the chemical composition of the secretion itself. Recovering this latent print, enhancing it to a state suitable for comparison, and then comparing it to a known standard: each of these stages introduces variables that the apparent simplicity of courtroom testimony almost never communicates adequately.

The choice of development technique is itself consequential, and the investigator who makes it forecloses alternatives that could have been informative. Aluminum or iron oxide powder dusting remains the most widely deployed method in the field, appropriate for smooth non-absorbent surfaces and capable of producing ridge-by-ridge visualization when the print is of sufficient quality and the surface contrast permits. Cyanoacrylate fuming deposits a polymer along the amino acid chains in the secretion residue and extends recovery to surfaces where powder adherence is insufficient. Ninhydrin and related reagents react with the amino acids in the residue on porous surfaces such as paper. None of these techniques is without cost: each changes the physical state of the evidence, and the sequence in which they are applied demands experienced judgment, because some techniques destroy the residue that others require and the decision is irreversible.

Where conventional methods reach their limits, multispectral and hyperspectral imaging has emerged as the most consequential technological advance in latent print recovery of the past two decades. By illuminating the print surface sequentially across wavelengths ranging from ultraviolet through visible light to near-infrared, and capturing the differential reflectance and fluorescence responses at each wavelength, these systems can reveal ridge detail invisible to conventional photography on surfaces whose optical complexity would otherwise defeat analysis entirely, including patterned textiles, pigmented plastics, and multi-component surfaces where conventional powder would produce undifferentiable background. The theoretical principle is straightforward: the chemical composition of the latent residue produces different optical responses from those of the substrate at particular wavelengths, and separating them spectrally allows visualization of a pattern that would otherwise be indistinguishable from its background.
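
As a rough illustration of that principle, the sketch below picks the illumination band at which residue and substrate differ most. The reflectance values are invented for illustration and are not measurements; the function names and the use of Michelson contrast as the measure of separation are my assumptions, not any vendor's method.

```python
# Hypothetical reflectance (0 to 1) per illumination band, in nanometres.
# Each entry is (latent residue, substrate). Illustrative values only.
REFLECTANCE = {
    365: (0.30, 0.12),   # near-ultraviolet
    450: (0.42, 0.40),
    550: (0.50, 0.52),
    650: (0.55, 0.50),
    850: (0.60, 0.58),   # near-infrared
}


def michelson_contrast(residue, substrate):
    """Contrast between two reflectances: 0 means identical, 1 is the maximum."""
    return abs(residue - substrate) / (residue + substrate)


def best_band(reflectance):
    """Return the wavelength whose residue/substrate contrast is largest."""
    return max(reflectance, key=lambda nm: michelson_contrast(*reflectance[nm]))


if __name__ == "__main__":
    for nm, (res, sub) in sorted(REFLECTANCE.items()):
        print(f"{nm} nm: contrast {michelson_contrast(res, sub):.3f}")
    print("best band:", best_band(REFLECTANCE), "nm")
```

A real system works on full images rather than a single averaged value per band, so this conveys only the idea of spectral separation, not an operational procedure.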

In practice, the technology adds a layer of decision-making about which wavelength combination to deploy, how to interpret the resulting images, and, critically, when digital enhancement has clarified genuine detail and when it has introduced artefact. Digital image processing, now standard in preparing latent print images for comparison, encompasses operations ranging from simple contrast adjustment through frequency-domain filtering to AI-assisted ridge reconstruction. Applied appropriately, these operations reveal genuine detail that would otherwise be lost. Applied carelessly or under pressure to produce a usable image from inadequate source material, they can introduce ridge details that were not in the original print or obscure ambiguities that should be preserved as honest limitations. There is no universally accepted protocol specifying which operations are permissible, under what circumstances, and how the processing history must be documented, and this absence of standardization is one of the less-discussed failure modes of the discipline.
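
To make concrete what documenting a processing history could look like, here is one possible shape for such a record. It is purely a sketch: the class names, field names, and operation labels are hypothetical, and nothing here reflects an accepted protocol, since none exists.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ProcessingStep:
    operation: str     # hypothetical label, e.g. "contrast_stretch"
    parameters: dict   # settings used for this operation


@dataclass
class ProcessingRecord:
    original_sha256: str                        # fingerprint of the untouched file
    steps: list = field(default_factory=list)   # operations in the order applied

    def add_step(self, operation, **parameters):
        """Append one enhancement operation and its settings."""
        self.steps.append(ProcessingStep(operation, parameters))

    def to_json(self):
        """Serialize the full history so it can be disclosed with the image."""
        return json.dumps(asdict(self), indent=2, sort_keys=True)


def start_record(original_bytes):
    """Begin a record by hashing the original, unprocessed image bytes."""
    return ProcessingRecord(hashlib.sha256(original_bytes).hexdigest())


record = start_record(b"raw latent image bytes")  # placeholder for file contents
record.add_step("contrast_stretch", low_percentile=2, high_percentile=98)
record.add_step("fft_bandpass", low_cutoff=0.05, high_cutoff=0.4)
```

Hashing the original first means any later reviewer can confirm which source image the listed operations were applied to.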

ACE-V: The Method, the Promise, and the Structural Vulnerability

The forensic community’s standardized approach to fingerprint comparison is designated ACE-V, an acronym for Analysis, Comparison, Evaluation, and Verification, developed with the explicit intention of imposing systematic structure on what had previously been a largely implicit and individually variable process. Understanding what ACE-V actually does, rather than what the acronym implies, is necessary to evaluating both its genuine strengths and its structural vulnerabilities.

In the Analysis phase, the examiner evaluates the latent print in isolation, before seeing any known print for comparison. The purpose is to assess the print’s quality and to determine what detail is reliably present, identifying the level of detail available across three hierarchical levels: the overall pattern class at Level 1, the specific ridge path characteristics including individual minutiae at Level 2, and the finest detail including pore location and edge morphology at Level 3. The examiner then decides whether the print contains sufficient reliable detail to support a meaningful comparison, and if not, the process stops. This phase is the most important in the entire sequence for the purpose of constraining cognitive bias, because it commits the examiner to an assessment of the latent print’s characteristics before she has seen the known print that will be used for comparison. When this phase is skipped or compressed, as it frequently is when operational pressure favors quick results, the entire subsequent analysis is contaminated by knowledge of what the known print looks like.

The Comparison phase places the latent and known prints in juxtaposition and examines the correspondence and discordance of features. The Evaluation phase produces one of three possible conclusions: identification, meaning the examiner concludes the prints share a common source; exclusion, meaning the examiner concludes the prints do not share a common source; or inconclusive, meaning the available detail is insufficient to support either conclusion. The Verification phase requires a second examiner to independently repeat the ACE portion of the process and reach her own assessment.

The word independently in that last sentence contains the critical vulnerability. Blind verification, in which the second examiner is genuinely unaware of the first examiner’s conclusion before conducting her own analysis, is the only form of verification that provides independent corroboration. Non-blind verification, in which the second examiner knows what her colleague concluded before beginning, is functionally a review of a conclusion rather than an independent test of it, and the cognitive science literature is clear that knowing the expected answer dramatically increases the probability of arriving at it, regardless of the underlying evidential basis. Langenburg, Champod, and Wertheim demonstrated in 2009 that when examiners knew the first examiner’s conclusion before beginning their verification, the confirmation rate was significantly higher than when they worked blind, without any change in the actual evidential material (Langenburg, G., Champod, C., and Wertheim, P., 2009, Testing for potential contextual bias effects during the verification stage of the ACE-V methodology when conducting fingerprint comparisons, Journal of Forensic Sciences, 54, 571-582). Despite this, blind verification remains far from universal in operational practice.

A further structural problem is the absence of a universal standard for the minimum number of corresponding minutiae required before an identification conclusion is permissible. Australia requires twelve points of correspondence; France and Italy require sixteen; Brazil and Argentina require thirty. The United States has no mandated threshold, leaving the determination entirely to the examiner’s judgment. This means that an identification made by a US examiner and an inconclusive conclusion reached by a Brazilian examiner could be based on the same latent print, and both would be consistent with their respective professional standards.
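
The consequence is easy to see when the thresholds quoted above are applied to a single print. In this sketch the numbers are the ones given in this article; the function name and the outcome labels are mine and purely illustrative.

```python
# Minimum corresponding minutiae per jurisdiction, as quoted in this article.
# None means there is no mandated numeric threshold.
MIN_POINTS = {
    "Australia": 12,
    "France": 16,
    "Italy": 16,
    "Brazil": 30,
    "Argentina": 30,
    "United States": None,
}


def threshold_outcome(corresponding_minutiae):
    """Report, per jurisdiction, whether the numeric threshold is met."""
    outcome = {}
    for country, minimum in MIN_POINTS.items():
        if minimum is None:
            outcome[country] = "examiner judgment"
        elif corresponding_minutiae >= minimum:
            outcome[country] = "threshold met"
        else:
            outcome[country] = "threshold not met"
    return outcome


if __name__ == "__main__":
    for country, result in threshold_outcome(14).items():
        print(f"{country}: {result}")
```

A latent with fourteen corresponding minutiae clears the bar in one jurisdiction, fails it in four, and is left to individual judgment in the sixth.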

What the Error Rate Studies Actually Tell Us

The most consequential empirical advance in understanding fingerprint examination reliability came from a landmark study published in the Proceedings of the National Academy of Sciences in 2011, designed to produce the most ecologically valid error rate estimates the profession had seen. Ulery, Hicklin, Buscaglia, and Roberts recruited 169 latent print examiners from federal, state, and local agencies, and each examiner compared approximately 100 latent and exemplar pairs drawn from a pool of 744, without knowing which pairs came from the same source.

The false positive rate was 0.1 percent across the study population, six false positive identifications out of 4,083 non-match comparisons. The false negative rate was 7.5 percent (Ulery, B. T., Hicklin, R. A., Buscaglia, J., and Roberts, M. A., 2011, Accuracy and reliability of forensic latent fingerprint decisions, Proceedings of the National Academy of Sciences, 108, 7733-7738). These numbers require careful interpretation that they rarely receive in courtroom contexts.

The 0.1 percent false positive rate is not the probability that any given identification is erroneous. It is the proportion of comparisons between prints from different sources, across the difficulty range used in this study, in which an examiner wrongly declared an identification. How often an identification is wrong in casework also depends on how often the prints being compared actually share a source, which the study does not measure. The difficulty range also did not include the hardest cases seen in operational practice. A separate 2014 study by the Miami-Dade Police Department, using a different design, produced a false positive estimate of approximately three percent, a figure that PCAST cited in its 2016 report as suggesting false positives may occur as frequently as one in eighteen cases under some operational conditions (President’s Council of Advisors on Science and Technology, 2016, Forensic Science in Criminal Courts: Ensuring Scientific Validity of Feature-Comparison Methods). The discrepancy between the two estimates has generated substantial methodological debate, and what the discrepancy itself demonstrates is that the field does not yet have a stable, universally accepted error rate for fingerprint examination.
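
The arithmetic behind the 0.1 percent figure, and the uncertainty around it, can be sketched in a few lines. The counts are those quoted above. The Wilson score interval is my choice of a simple textbook method and should not be taken as the calculation used in either the study or the PCAST report.

```python
import math


def wilson_interval(errors, trials, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 is about 95%)."""
    p = errors / trials
    denom = 1 + z**2 / trials
    center = (p + z**2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z**2 / (4 * trials**2)) / denom
    return center - half, center + half


FALSE_POSITIVES = 6          # as quoted in the text
NONMATE_COMPARISONS = 4083   # as quoted in the text

rate = FALSE_POSITIVES / NONMATE_COMPARISONS
low, high = wilson_interval(FALSE_POSITIVES, NONMATE_COMPARISONS)

if __name__ == "__main__":
    print(f"observed rate: {rate:.2%}")
    print(f"approximate 95% interval: {low:.2%} to {high:.2%}")
    print(f"one in eighteen: {1 / 18:.2%}")
```

Even the upper end of that interval sits well below one percent, while one in eighteen is more than five percent. The two figures cannot be reconciled by sampling error alone, which is why the difference in study design matters so much.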

Presenting any single number to a court as if it were the settled scientific answer to the reliability question misrepresents the state of knowledge. That this happens regularly is one of the discipline’s most persistent failures.

From Evidence to Opinion: Statistics and What Courts Actually Hear

The translation of a fingerprint comparison into a courtroom statement is where forensic science and legal communication most frequently diverge in ways that are consequential for adjudication. The vocabulary of forensic certainty has historically operated in categorical terms: identified, excluded, inconclusive, with identification presented as a determination so close to certain that error probability was treated as effectively zero. The National Academy of Sciences documented in 2009 that this framing is scientifically indefensible. The PCAST report reinforced that conclusion in 2016. No pattern-comparison method produces a categorical statement of common source with a zero error rate, and the empirical literature has established that the rate is non-zero.

The statistically defensible alternative is the likelihood ratio framework, which quantifies how much more probable the observed correspondence between the latent and known print would be if they shared a common source than if they did not, given what is known about the distribution of fingerprint features in the relevant reference population. A likelihood ratio of ten thousand means that the observed correspondence is ten thousand times more probable under the hypothesis of common source than under the hypothesis of chance coincidence. This is a meaningful statement of evidentiary weight that can be combined with prior probabilities to reach a posterior probability of common source, and it is the framework that most rigorous forensic statisticians regard as the correct way to express fingerprint evidence.
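
How a likelihood ratio combines with a prior can be shown with Bayes' rule in odds form, where posterior odds equal the likelihood ratio multiplied by the prior odds. The likelihood ratio of ten thousand is the example used above; the prior probabilities are hypothetical and chosen only to show how strongly the result depends on them.

```python
def posterior_probability(likelihood_ratio, prior_probability):
    """Bayes' rule in odds form: posterior odds = likelihood ratio x prior odds."""
    if not 0 < prior_probability < 1:
        raise ValueError("prior_probability must be strictly between 0 and 1")
    prior_odds = prior_probability / (1 - prior_probability)
    posterior_odds = likelihood_ratio * prior_odds
    return posterior_odds / (1 + posterior_odds)


LR = 10_000  # example value from the text

if __name__ == "__main__":
    # Hypothetical priors: one in a million, one in a thousand, one in a hundred.
    for prior in (1 / 1_000_000, 1 / 1_000, 1 / 100):
        post = posterior_probability(LR, prior)
        print(f"prior {prior:.6f} -> posterior {post:.4f}")
```

With a prior of one in a million, the same likelihood ratio leaves the posterior probability of common source at about one percent; with a prior of one in a hundred, it rises to about ninety-nine percent. The evidence is identical in both cases, which is exactly why the likelihood ratio and the prior must be kept distinct.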

The practical challenge is that most operational fingerprint laboratories do not calculate likelihood ratios for routine casework, most fingerprint examiners are not trained to calculate them, and most courts are not accustomed to receiving fingerprint evidence in probabilistic terms. The discipline is in transition, with the NIST Forensic Science Standards program working toward operational statistical reporting frameworks, but the transition is slow and incomplete. In the meantime, the gap between what the evidence supports and what the courtroom hears remains real and consequential.

What a jury typically hears is this: the fingerprint examiner examined the latent print from the scene and the known print from the defendant, and she concluded they match. The scientific reality is that this statement, without quantification of the probability, without characterization of the quality and completeness of the latent, without acknowledgment of the error rate literature, conveys substantially more certainty than the evidence supports.

AFIS, AI, and the Automation Gap

Automated Fingerprint Identification Systems have transformed the scale at which fingerprint evidence can be deployed, moving the discipline from a world in which a given latent print could be manually compared against a few hundred known prints to one in which it can be searched against databases containing hundreds of millions of exemplars in seconds. The Next Generation Identification system maintained by the FBI, and its national equivalents in Germany, the United Kingdom, France, and elsewhere, represent the largest organized collections of biometric identification data ever assembled.

What AFIS does, and what it does not do, are both important. The system compares encoded fingerprint data, not raw images, producing a ranked list of candidate matches above a configurable threshold score. It is explicitly not an identification system. No AFIS in routine operational use declares an identification: the system produces candidates, and a human examiner must then evaluate those candidates using ACE-V to reach an identification conclusion. The system’s output is the starting point for human analysis, not its end point.
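
The candidate-list behaviour described above can be sketched as a filter and a sort. Real AFIS scoring is system-specific; the record identifiers, scores, threshold, and function name here are all invented for illustration.

```python
from typing import NamedTuple


class Candidate(NamedTuple):
    record_id: str   # hypothetical database identifier
    score: float     # similarity score; the scale is system-specific


def candidate_list(scores, threshold, max_candidates=10):
    """Return candidates scoring at or above the threshold, best first."""
    kept = [Candidate(rid, s) for rid, s in scores.items() if s >= threshold]
    kept.sort(key=lambda c: c.score, reverse=True)
    return kept[:max_candidates]


# Invented scores, for illustration only.
scores = {"A-1021": 0.91, "B-0417": 0.64, "C-7730": 0.78, "D-0052": 0.35}
shortlist = candidate_list(scores, threshold=0.60)

if __name__ == "__main__":
    for rank, cand in enumerate(shortlist, start=1):
        print(f"rank {rank}: {cand.record_id} (score {cand.score:.2f})")
```

Nothing in the returned list is an identification. It is only the order in which an examiner will meet the candidates.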

This architecture has an important implication for cognitive bias. When AFIS returns a candidate list that places a specific individual at rank one with a high similarity score, the human examiner who reviews that candidate is not approaching the comparison as a neutral analytical exercise: she has been primed by the system’s ranking. The candidate with the highest score is the one she compares first, and she works under an implicit expectation, reinforced by the AFIS result, that this is the likely match. The contextual contamination that Dror documented across forensic disciplines applies with equal force to AFIS output, and research on how AFIS ranking affects subsequent examiner conclusions has not yet fully penetrated operational practice.

Artificial intelligence is now being applied at multiple points in the latent print workflow, from automated quality assessment through AI-assisted ridge enhancement to deep learning-based matching algorithms that in NIST benchmarks have in some conditions outperformed traditional minutiae-based AFIS. The practical challenge, as with AFIS, is the translation of controlled-benchmark results to the operational environment. AI systems trained on available data may systematically underperform on print types or surface substrates underrepresented in their training sets, and there is currently no established framework for characterizing these operational performance gaps before a technology is deployed in casework.

Cases From the Field: What the Difficult Ones Actually Look Like

The cases that most illuminate the real operational challenges of fingerprint evidence are not the identifications from a clear print on a dry smooth surface, which work reliably and present little analytical difficulty. They are the cases where the print is partial, distorted by the deformation of the finger against the substrate, degraded by water or heat or contamination, or deposited on a surface whose optical properties defeat standard recovery.

A burglary case I worked years ago involved a latent print recovered from a rain-wetted window frame. The image showed sufficient ridge detail for a meaningful comparison, but the distortion introduced by water contact had displaced ridge segments in ways that made their relationship to neighboring ridges ambiguous. The comparison to the suspect’s known print showed a set of corresponding features and a set of apparent discordances that, depending on how the distortion was modeled, could support or contradict common source. The honest conclusion, arrived at after careful analysis, was inconclusive: the available detail was insufficient to resolve the ambiguity. What a less careful examiner, or one under operational pressure to produce a definitive result, might have done with that print is an open question that the absence of documented decision standards makes impossible to answer.

A murder case involving multispectral recovery from a patterned textile illustrates the technology’s power and its demands simultaneously. The print was invisible under all conventional lighting conditions, and standard powder development on the fabric produced undifferentiable background. Multispectral imaging at a wavelength in the near-ultraviolet revealed ridge structure consistent with a fingerprint’s Level 1 and Level 2 characteristics. Two examiners disagreed on the conclusion, one reaching identification and one reaching inconclusive. A third examiner, working blind without knowledge of the first two conclusions, also reached inconclusive. The case turned on DNA evidence, and the fingerprint identification did not proceed to trial, which was the appropriate outcome given the evidential state of the print. In a jurisdiction without blind verification procedures, the disagreement might never have surfaced.

The Ethics of Certainty

The ethical dimension of fingerprint evidence presentation is not a soft addendum to the technical science. It is embedded in it, because the scientific epistemology of fingerprint examination requires probabilistic rather than categorical expression of conclusions, and the failure to communicate this accurately to courts constitutes scientific misrepresentation regardless of any individual examiner’s intent.

A fingerprint examiner who testifies that she has identified the defendant as the source of the latent print from the crime scene, and that there is no possibility of error in this identification, is making a claim the scientific literature does not support. The 2009 National Academy of Sciences report stated this explicitly. The 2016 PCAST report stated it again. The courtroom vocabulary has not changed as fast as the scientific understanding has developed, and the distance between the two is where people have been wrongly convicted.

The discipline’s value does not decrease when its limitations are stated honestly. Fingerprint evidence is strongest when it is part of a constellation of evidence, a reliable identification expressed with appropriate statistical qualification and corroborated by DNA, digital traces, behavioral evidence, and witness testimony that independently supports the same conclusion. Honest uncertainty communication is the precondition for rational evidential weight assessment. And rational evidential weight assessment is what justice requires from forensic science, not more, and not less.

The full technical and statistical analysis underlying this article is available in Rauscher and Morgott (2025), referenced below.

References

- Dror, I. E., Charlton, D., and Peron, A. (2006). Contextual information renders experts vulnerable to making erroneous identifications. Forensic Science International, 156(1), 74-78.

- Langenburg, G., Champod, C., and Wertheim, P. (2009). Testing for potential contextual bias effects during the verification stage of the ACE-V methodology when conducting fingerprint comparisons. Journal of Forensic Sciences, 54(3), 571-582.

- MedlinePlus Genetics, National Library of Medicine (2023). Are fingerprints determined by genetics? National Institutes of Health.

- National Research Council, National Academy of Sciences (2009). Strengthening Forensic Science in the United States: A Path Forward. National Academies Press.

- President’s Council of Advisors on Science and Technology (2016). Forensic Science in Criminal Courts: Ensuring Scientific Validity of Feature-Comparison Methods. Executive Office of the President.

- Rauscher, G. A., and Morgott, M.-L. (2025). Forensic Fingerprint Analysis: Evaluating Scientific Foundations, Technological Innovations, and Judicial Implications. International Institute of Forensic Expertise, Starnberg. https://doi.org/10.5281/zenodo.15101173

- Ulery, B. T., Hicklin, R. A., Buscaglia, J., and Roberts, M. A. (2011). Accuracy and reliability of forensic latent fingerprint decisions. Proceedings of the National Academy of Sciences, 108(19), 7733-7738.