When Tehran Writes and Brussels Stays Silent

On an encryption tool that emerged from a certain kind of rage, on letters from Iran that gave me a more honest picture of my own world, and on the quietly shared longing of bureaucrats in Brussels and mullahs in Tehran for a world in which only they are permitted to read.

My phone is usually off. Not always, not entirely switched off from the world, but more often than before, and sometimes I do not carry it at all, which is its own chapter and a subject I will address at length elsewhere, specifically in a book I am currently finishing whose working title is “The Hamster Wheel.” Anyone wondering why a person who works in a technological field and runs an AI that filters his calls would be the same person who switches off his phone should know that I do not consider these two things contradictory. Technology I control is useful, and technology that controls me is the hamster wheel. More on that another time.

On an afternoon in March I was sitting by the small artificial lake in Parco Sempione in Milan, on one of the benches not far from the Ponte delle Sirenette, that nineteenth-century cast-iron bridge over which 4 wrought-iron mermaids keep watch, as if the city were convinced that a pond could be made safer through mythological supervision. Bandit, my Malinois, was sitting beside me, or more precisely: he was not sitting but standing, as Malinois stand, with that charged attention which in this breed constitutes the resting state, and he was staring into the water. He was fighting, visibly, with his water shyness, that irrational reservation he maintains toward anything that moves and is wet, and he was losing this fight with a dignity I found admirable. The ducks had no interest in him, the water had no interest in him, and he, who normally wants to control everything, stood there and waited for the situation to resolve itself.

I had the phone off, as usual. At some point I switched it on, waited for the messages to come through, and read, and what I read came from Tehran.

An Iranian, evidently technically fluent, wrote to me in English, measured, precise, without preamble, and asked whether my Encryptor tool was secure enough to encrypt files that authorities should not be able to decrypt. Not with the kind of naivety that describes a wish, but with a level of technical literacy that told me this person understood exactly what they were asking, and that the question was not posed out of curiosity but out of necessity. When someone from Iran asks this question, it is not an academic inquiry. The Freedom House index rates Iran at an overall score of 10 out of 100 in its 2026 Freedom in the World report, placing it among the most restrictive digital environments on earth (Freedom House, 2026, Freedom in the World). The internet freedom score stands at 13 out of 100, and by the estimate of the American government, the Iranian regime spends at least 4 billion dollars annually on suppression and internet censorship, which is not a budgetary error but policy conviction expressed in a number.

Bandit glanced over at me briefly, as if he could sense the shift in posture that an interesting email produces in me, then turned back to the water, which had still not persuaded him. I wrote back, and then more letters came.

The Volume I Had Not Anticipated

What I experienced in the days and weeks that followed was not a metaphorical flood but a measurable, concrete accumulation of inquiries that told me something I had not built into my expectations: that this small tool, published on GitHub without ceremony, without a press release, without a marketing budget, and without asking anyone’s permission, had reached people in situations where the difference between something working and something failing is no longer a matter of convenience. Letters from Iran. From countries whose names appear in European newsrooms mainly when elections are being contested or bombs are falling. From journalists who take source protection seriously. From people who wrote to me not about their own files, but about files belonging to someone else for whom a mistake carries consequences that I would not wish on anyone to experience.

And alongside these came the other kind: the expressions of praise that honored me and simultaneously unsettled me, because for me praise has always been a diagnostic category as much as a compliment. When someone praises a tool, they reveal to me which deficiency that tool has addressed. And what the letters, the emails, the messages through various channels told me was essentially the same thing: that someone had been searching for an alternative that does not depend on a company sitting inside some jurisdiction that can give a government access on request, and that they had not found one until they came across my Encryptor.

The praise honors me, and I say that without the cold deflection with which certain scientists receive approval, as though finding it agreeable were an intellectual weakness. But the praise also troubles me, because it shows me how deep the gap is that it names. When people from Iran contact a forensic expert in Bavaria to understand whether his open-source browser tool can withstand state decryption attempts, this is less a compliment for the forensic expert than it is a finding about the condition of the digital world these people are required to live in.

Why This Tool Exists At All

Before I explain how the Encryptor functions, I need to explain why it exists, because one of these things is incomplete without the other. I did not build it because a business model occurred to me. I did not build it because an investor knocked on my door. I built it because at a certain point in my engagement with European data protection legislation, digital surveillance infrastructure, and the systematic denial of genuine privacy to ordinary citizens, I reached a position where anger was a more productive response than commentary.

For more than 25 years I worked as a court-appointed expert. I sat in investigation rooms, I evaluated digital material, I saw how authorities work with what is legally accessible to them, and I saw simultaneously how little citizens understand about what of theirs is accessible, in which form, and under which conditions. This asymmetry between state knowledge of access and citizens’ ignorance of access is not an oversight in the system, it is the system itself, and against systems I cannot change, I build tools.

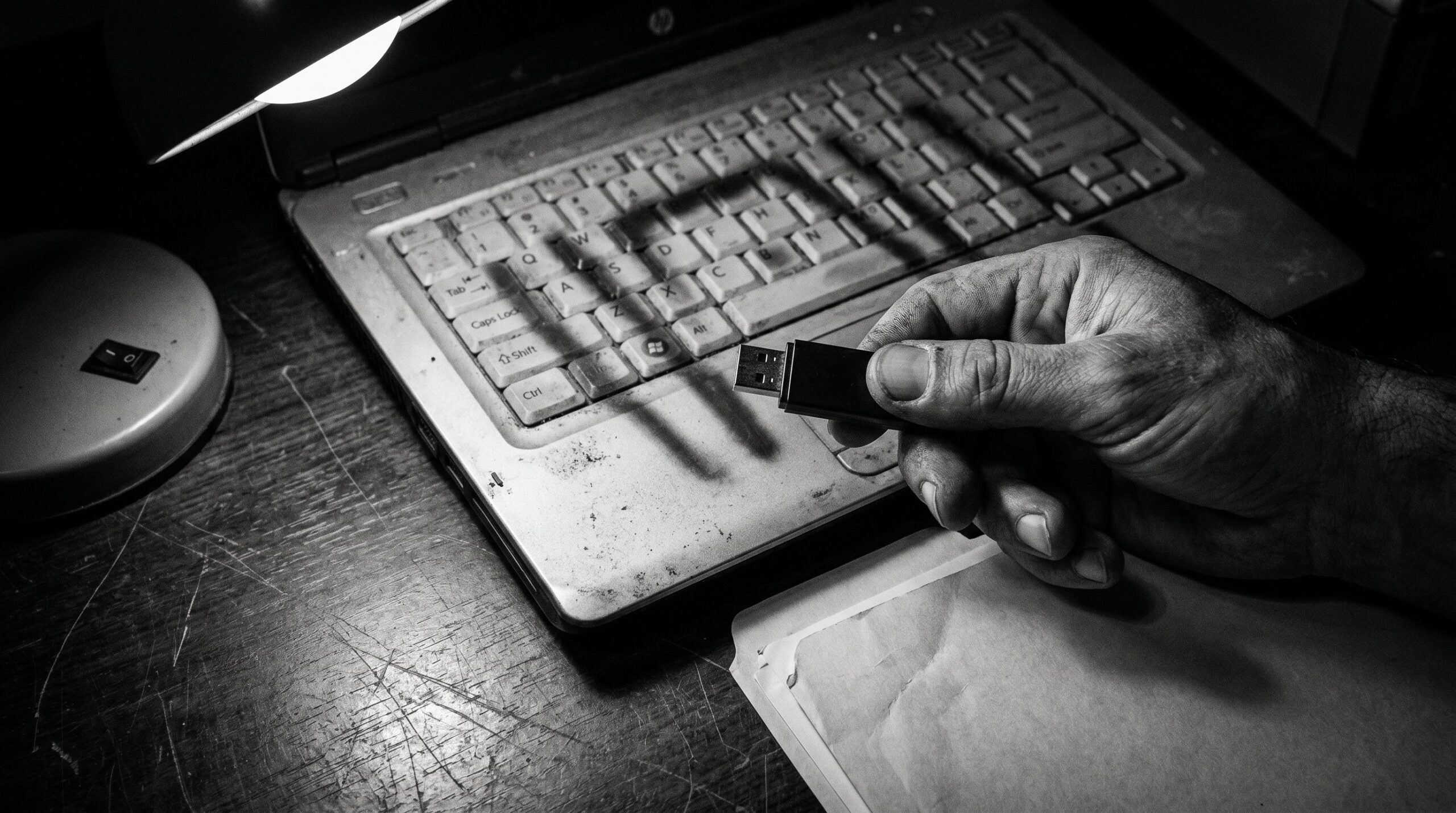

The Encryptor is publicly accessible on GitHub, open source, and costs nothing. It will remain free. Not because generosity strikes me as a particular virtue, but because the moment a security tool depends on a payment model is the moment at which those who need it most no longer have access. Someone in Tehran with a compromised laptop does not pay for software using a credit card registered in their own name. That is so obvious that it gives me pause every time someone asks me why the tool is free.

What the Encryptor Actually Is, and What It Is Not

I want to start with the most common misunderstanding, because it is the foundation for all further questions. The Encryptor is not a VPN. It is not anonymization software. It does not conceal IP addresses, it does not reroute internet traffic, it does nothing that makes you invisible on the network. It does something else, and in certain situations it does something more important: it makes files unreadable to anyone who does not know the password.

That sounds simple, but it is not. The question of what exactly “unreadable” means in a cryptographic sense is one I have had to answer more often than I expected, and I will answer it here as precisely as the absence of mathematical notation allows, meaning precisely enough that someone who has not studied computer science understands what happens, without me becoming imprecise in the process. Imprecision would be the worst thing I could offer here, because it would amount to selling false security.

The technical foundation of the Encryptor consists of 2 algorithms that can be described as the standard equipment of modern cryptography, not because someone has placed them on a marketing slide labeled “military grade,” though that description is also accurate, but because they belong to the small number of methods that after decades of intensive cryptographic research show no practically exploitable attack path.

The first is AES-256-GCM, the Advanced Encryption Standard with a 256-bit key operating in Galois/Counter Mode. AES was ratified by the American National Institute of Standards and Technology in 2001 as the national encryption standard and has since become the de facto standard for symmetric encryption in governments, militaries, financial institutions, and technology companies worldwide (NIST, 2001, Announcing the Advanced Encryption Standard, FIPS 197). A 256-bit key means that there are 2 to the power of 256 possible key combinations, roughly 1.16 times 10 to the power of 77. This number is sufficiently large that no supercomputer foreseeable within any planning horizon could approach it by systematic exhaustion. To make this concrete: if one were to run a billion machines, each testing a billion keys per second, for 14 billion years, they would have covered less than one part in 10 to the power of 41 of the key space, and the attack would be no meaningfully closer to a brute-force solution against AES-256 than it was on the first day. That is not enthusiasm for one’s own software, but mathematics that does not negotiate.
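The scale of a 256-bit key space can be checked with a few lines of arithmetic. What follows is a minimal sketch, not a benchmark: the number of machines and the keys tested per second are illustrative assumptions chosen to be generous to the attacker.

```python
# Back-of-the-envelope check of the AES-256 key space.
# The attacker figures below are illustrative assumptions, not measurements.

KEY_SPACE = 2 ** 256                    # number of possible 256-bit keys
MACHINES = 10 ** 9                      # assumed: one billion machines
KEYS_PER_SECOND = 10 ** 9               # assumed: one billion keys per second, per machine
SECONDS_PER_YEAR = 365 * 24 * 60 * 60   # ignoring leap years
YEARS = 14 * 10 ** 9                    # 14 billion years

# Total number of keys the assumed attacker could try in that time.
keys_tested = MACHINES * KEYS_PER_SECOND * SECONDS_PER_YEAR * YEARS

# Share of the key space that has been searched.
fraction_covered = keys_tested / KEY_SPACE

print(f"keys in the key space: {KEY_SPACE:.3e}")
print(f"keys tested:           {keys_tested:.3e}")
print(f"fraction covered:      {fraction_covered:.3e}")
```

Even under these assumptions the searched share of the key space stays many orders of magnitude below anything useful, which is why practical attacks target the password or the person rather than the key.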

The second algorithm is PBKDF2, the Password-Based Key Derivation Function 2, implemented with 100,000 iterations and a unique random salt generated for each individual file. What PBKDF2 does is the following: it takes the password the user enters, an ordinary passphrase like any other, and transforms it into the cryptographic key that AES-256 then uses to actually encrypt the file. The reason one does not simply take a single plain SHA hash of the password for this transformation is straightforward: a direct hash is fast to compute, and anything fast to compute can be tried quickly by an attacker. PBKDF2 makes this process deliberately slow by applying the hash function 100,000 consecutive times, so that computing a single key takes measurably longer, a delay imperceptible to the user but one that slows a brute-force attacker, who could otherwise try millions of passwords per second, by a factor of roughly 100,000. The per-file salt ensures that identical passwords produce different keys, so work done against one file cannot be reused against another. OWASP, the Open Worldwide Application Security Project, described 100,000 iterations as the minimum standard at the time of implementation (OWASP, 2023, Password Storage Cheat Sheet), with more recent guidance moving the recommendation considerably higher, a development I will incorporate into future versions.
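The derivation step can be sketched with Python’s standard library. This is an illustration of the technique, not the Encryptor’s own code, which runs in the browser. The iteration count comes from the text; the choice of SHA-256 as the underlying hash, the 16-byte salt length, and the helper names `derive_key` and `new_salt` are assumptions made for this example.

```python
import hashlib
import os

ITERATIONS = 100_000   # iteration count named in the text
SALT_BYTES = 16        # assumed salt length; the text only says "unique random salt"
KEY_BYTES = 32         # 32 bytes = 256 bits, the key size AES-256 expects


def new_salt() -> bytes:
    """Return a fresh random salt from the operating system's CSPRNG."""
    return os.urandom(SALT_BYTES)


def derive_key(password: str, salt: bytes) -> bytes:
    """Stretch a passphrase into a 256-bit key with PBKDF2-HMAC-SHA256 (hash choice assumed)."""
    return hashlib.pbkdf2_hmac(
        "sha256",
        password.encode("utf-8"),
        salt,
        ITERATIONS,
        dklen=KEY_BYTES,
    )


# The same passphrase with two different salts yields two unrelated keys.
salt_a = new_salt()
salt_b = new_salt()
key_a = derive_key("correct horse battery staple", salt_a)
key_b = derive_key("correct horse battery staple", salt_b)

print(len(key_a) * 8, "bit key derived")
print("keys differ across salts:", key_a != key_b)
```

Because the salt must be available again at decryption time, it is normally stored next to the ciphertext; it is not secret, it only prevents precomputed tables and cross-file reuse of an attacker’s work.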

What makes the architecture distinctive is not only the choice of these algorithms, which would already be solid and sufficient on its own. What makes it distinctive is the zero-knowledge architecture of the entire system. The Encryptor runs no server, maintains no database, and transmits nothing to the internet, and it does not know your password and structurally cannot know it, because the entire cryptographic process, both encryption and decryption, runs exclusively in the working memory of your browser and disappears completely when you close the tab. I use the browser’s native Web Crypto API, meaning the cryptographic functions that Google Chrome, Firefox, and Safari already have built in and that their vendors maintain and audit, rather than incorporating an external library that I cannot control.

The architecture has no third-party dependencies and no installable packages that need to be downloaded from external repositories. What exists is a single file called index.html, which can be opened offline, without an internet connection, on an air-gapped system that has no network access at all, which for some of the people who write to me is not a theoretical possibility but a practical precaution.

What This Means When You Are Writing From Tehran

I want to return to the Iranian letters now, because the technical context I have just described is incomplete without them.

Iran has significantly intensified its surveillance of the population since June 13, 2025, immediately following the Israeli strikes on Iranian nuclear facilities, and has further restricted access to international networks (Wikipedia, 2026, Internet censorship in Iran). Since January 2026, a nationwide internet shutdown has been in effect that is considered the longest near-total internet cutoff in the country’s recorded history, with approximately 11 million Iranians dependent on Psiphon VPN bridge connections to maintain any connection to the outside world at all (Liu et al., 2026, Iran’s January 2026 Internet Shutdown). The state has now introduced what it calls “Filternet Plus,” a state-controlled intranet that replaces free international traffic for most citizens, while a small group of registered users retains unrestricted access through so-called white SIM cards, among them parliamentary representatives, state journalists, and government officials. The pattern is one history has seen before.

In this context, the question of whether an AES-256-GCM-encrypted tool can be broken by state hardware is not a theoretical question but a very practical one, and the answer is: no, not through brute-force computation. No state hardware in existence, including that of the NSA, GCHQ, or the Iranian intelligence apparatus, can break AES-256 with correct implementation and a strong password through exhaustive search. That is the consensus of the international cryptographic research community, not my personal assessment. What can be broken is the password, if it is weak, and the human component, if someone is placed under sufficient pressure to disclose it. The mathematics itself is intact.
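How much the password matters can be estimated in the same way. The sketch below assumes passwords whose characters or words are chosen uniformly at random, which human-chosen passwords rarely are, and it assumes a hypothetical attacker rate of one million guesses per second after key stretching. Both figures are illustrative, and the function names are invented for this example.

```python
import math

SECONDS_PER_YEAR = 365 * 24 * 60 * 60
ASSUMED_GUESSES_PER_SECOND = 1e6  # hypothetical attacker rate, not a measurement


def entropy_bits(length: int, alphabet_size: int) -> float:
    """Entropy of a secret built from `length` symbols drawn uniformly at random."""
    return length * math.log2(alphabet_size)


def years_to_exhaust(bits: float, guesses_per_second: float) -> float:
    """Worst-case time, in years, to try every candidate at the given rate."""
    return (2 ** bits / guesses_per_second) / SECONDS_PER_YEAR


examples = [
    ("8 lowercase letters", 8, 26),
    ("12 printable ASCII characters", 12, 94),
    ("6 random words from a 7776-word list", 6, 7776),
]

for label, length, alphabet_size in examples:
    bits = entropy_bits(length, alphabet_size)
    years = years_to_exhaust(bits, ASSUMED_GUESSES_PER_SECOND)
    print(f"{label}: {bits:.1f} bits, about {years:.2e} years to exhaust")
```

Under these assumptions the eight-letter password is exhausted in a matter of days, while the six-word passphrase remains far out of reach. The cipher is the same in every case; only the password changes the outcome.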

What touches me in the letters from Iran, and I say that with the composure of someone who has worked for courts and investigative authorities for more than 25 years, is not the flattery of someone using my tool, but the implicit statement behind each of these inquiries: that this person has a file they need to encrypt because it is dangerous for someone else to read it. That is not an abstract privacy discussion of the kind conducted in German talk shows over a glass of white wine. That is concrete reality with concrete consequences for concrete human beings.

Brussels, Attempting to Regulate Mathematics

And now comes the part that makes me want to stand up from my desk, although I do not smoke and therefore have no cigarette as a pretext: Europe.

Because while Iranians write to me asking whether their state can read their encrypted files, a legislative apparatus in Brussels has been at work since 2022 trying to answer the European version of this question, and in the opposite direction. The so-called Chat Control, officially the Proposal for a Regulation to Prevent and Combat Child Sexual Abuse, or CSAR, is the most ambitious European project to date for systematically undermining encryption, and it has enjoyed the distinction of being declared politically dead several times before reappearing each time in a slightly different suit (Wikipedia, 2026, Chat Control).

The core concept was as simple as it was alarming: all private messages, all files, all transmitted content would be searched automatically for illegal material before encryption, that is, still on the user’s device. This is called client-side scanning, and it means in plain language that what appears technically to be encrypted communication is in fact not encrypted at all, because the user’s device functions as a small police auxiliary that looks and reports before the encryption takes effect. The Electronic Frontier Foundation called the Chat Control plans “existentially catastrophic for encryption” (EFF, 2025). Signal stated that the company would leave Europe before implementing backdoors (Computer Weekly, 2025). The European Court of Human Rights found in February 2024 in the case Podchasov v. Russia that attempts to weaken encryption or create backdoors constitute a violation of the right to privacy. Russia against Russia, as one might say, and that ruling echoes oddly in Brussels.

On March 26, 2026, the European Parliament rejected the extension of the first Chat Control iteration by a single vote, the narrowest possible margin, while the second iteration, commonly called Chat Control 2.0, remains under negotiation, with the Polish Council Presidency of 2026 providing fresh momentum. The pattern is familiar. One dies three times, and at the fourth attempt one returns wearing a new hat.

What I Saw in Those Years, and Why the Argument Does Not Hold

At this point I need to say something that is conspicuously absent from the public debate about Chat Control, namely the perspective of someone who has not spoken about child abuse in a Brussels commission room but has held the hard drives in their hands.

From 1998 to 2000 I worked on private contracts, examining corporate networks after intrusions, analyzing and locking out hackers, reconstructing attacks, and cleaning systems infected with malware, and from 2000 onwards I worked as a court-appointed expert evaluating seized computer systems for prosecution authorities and investigative agencies, over several years, systematically, within what were at the time still comparatively new structures of digital forensics. What I found on these systems has stayed with me to this day, in a way that does not fade and does not soften, and I choose my words here carefully, because the subject demands it. We referred to incrementally examined storage media, meaning physical carriers examined layer by layer, directory by directory, for relevant material, and what they contained in some cases exceeded a hundred thousand individual files, images and video material, content against whose existence one develops no habituation, no matter how many hours one has spent with it.

The video examination followed a method that sounds straightforward from the outside and was anything but from the inside: we used a slider that sampled the material at defined time intervals, because perpetrators had learned to camouflage themselves. A seized drive contained western films, documentaries, innocuous compilations, and the actual material began at minute 36 or minute 42, cut into conventional entertainment in the hope that a glance at the filename and the opening seconds would be enough to mislead an examiner. It was not enough, and we watched everything.

To identify already known files we used PERKEO, an acronym standing for Programm zur Erkennung Relevanter Kinderpornographischer Eindeutiger Objekte, meaning Program for the Detection of Relevant Unambiguously Child Pornographic Objects, which the Federal Criminal Police Office maintained centrally and synchronized with the state criminal police offices. PERKEO operated on the basis of MD5 hash values, meaning digital fingerprints of each individual file, and this mechanism deserves a brief explanation, because it is central to understanding the entire problem. A hash value is a computational signature generated from the complete content of a file, and it does not change as long as the file remains identical, whether it sits on a hard drive in Bavaria or on a server in Bangkok, whether it has been shared once or ten thousand times. Only when someone takes a screenshot of the file or opens and re-saves it in an image editing program does the pixel structure shift slightly, and with it the hash value. Whoever forwards a file unchanged leaves an identical digital trace. At the time, before the darknet, when the exchange of this material took place predominantly through AOL forums, email distribution lists, and newsgroups, this was a powerful instrument, because the files remained identical and the hash values therefore matched. Every hit was a known image, every hit was a child who had been subjected to documented abuse.
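The matching principle can be shown in a few lines. This is a generic sketch of hash-based file identification and not PERKEO’s actual implementation, which the text does not describe beyond its use of MD5. The byte strings, the reference set, and the function names are hypothetical stand-ins.

```python
import hashlib


def md5_fingerprint(data: bytes) -> str:
    """Return the MD5 hash of a file's complete content as a hex string."""
    return hashlib.md5(data).hexdigest()


# Stand-ins for file contents; a real examination would read files from disk.
original = b"example file content"
forwarded_copy = bytes(original)      # byte-identical copy, as when a file is forwarded
re_saved = original + b"\x00"         # any change to the bytes, as after re-saving

# Hypothetical reference set of fingerprints of already known files.
known_hashes = {md5_fingerprint(original)}


def is_known(data: bytes) -> bool:
    """Check whether the content matches a fingerprint in the reference set."""
    return md5_fingerprint(data) in known_hashes


print("forwarded copy matches:", is_known(forwarded_copy))
print("re-saved file matches: ", is_known(re_saved))
```

The identical copy produces the same fingerprint wherever it is stored, while a single changed byte produces an unrelated one. That is both the strength of the method against unchanged files and its limit once files are re-encoded.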

I say this not to produce horror. I say it because this experience places me in a position to assess the argument for Chat Control from the inside, and the assessment that results is sharper than any technical paper. The perpetrators who were active then, and the perpetrators who are active today, have one thing in common with Signal, Telegram, and similar platforms: they do not use them for what this is actually about. The real exchange of abuse material took place then through AOL private groups and email chains invisible to outsiders, and it takes place today through the darknet, through encrypted exchange structures, through infrastructures that have about as much to do with mass surveillance of the WhatsApp communications of 450 million Europeans as a western film has to do with what begins at minute 36. Federal criminal police agencies and international partner authorities have made considerable technical progress in the darknet in recent years, including the so-called timing analyses within the Tor network, with which the anonymity of one of the operators of the platform Boystown was broken by identifying his Tor entry nodes (Panorama/STRG_F, 2024, Ermittlungen im Darknet: Strafverfolger hebeln Tor-Anonymisierung aus). It is miserably slow and resource-intensive, but it works, because it starts where the perpetrators actually are.

Anyone distributing child sexual abuse material today using Telegram is, with respect, an idiot, and idiots do not constitute the strategic majority in any perpetrator group. Chat Control would have no more effect on these perpetrators than a door lock has on someone entering through the window. What it would produce is a comprehensive infrastructure for mass surveillance of all European communications, declared on the day of its creation to serve one purpose and available on every following day to serve another. I have seen enough to take the child protection argument seriously, and I have seen enough to know that this specific proposal does not deliver it.

What occupies me about this situation, with the precision of a foreign object in the visual field, is the structural resemblance between the European and the Iranian position. Both say the same thing to the citizen: encryption is permissible if we hold the key. Both justify it differently, the former invoking child protection, the latter national security, but the outcome they are reaching for is identical: a world in which only authorized eyes read. The difference between Tehran and Brussels does not lie in the logic of the ambition, but in the clothing placed over that logic, and in the fact that the clothing in Brussels is considerably more expensively tailored.

What I Learned From the Letters of Praise

There are messages that honored me in a way I had not anticipated, because the praise did not come from those from whom praise is ordinarily expected. Not from professional colleagues, not from institutions, but from a journalist in a country whose name I do not write here, who informed me that they had used my tool to secure documentary material before a search of their office, and that the material was intact once the search had been completed and the laptop seized. Or from a woman in another country who wrote to me in English, briefly and without emotional elaboration, that she “places her trust” in my tool, which in the context she described was not a compliment but a transfer of responsibility.

I take this responsibility seriously. That is the reason I explain here what happens under the surface, rather than sheltering behind marketing terms such as “military-grade encryption” which, while accurate, explain nothing. Whoever trusts me should know what they are trusting. Not my professional standing, not a brand name, but mathematics that I did not invent and have not modified, that has been scrutinized and confirmed over decades, and that I have packaged in a form that a person without programming knowledge can open in a browser and use without installing anything, registering with anything, or notifying any service of anything.

The open-source principle is not an ideological position but the only form of trust that retains meaning in a cryptographic context. Someone who tells me their tool is secure but will not show me the code asks me to believe them. Someone who shows me the code, which I can read myself or have read by someone I trust, asks me to trust the mathematics, and that is a request I am glad to honor.

The Otto Sapiens Parenthesis, and What It Reveals

At this point I must mention the Otto Sapiens, because he appears in every one of my reflections eventually, like an inescapable animal one did not invite into the garden but which persists regardless. The Otto Sapiens, that variant of Homo Sapiens that believes itself fully informed after thirty minutes of podcast consumption, is interested in encryption for precisely as long as encryption is in the news cycle. He clicks on articles about Chat Control, finds them “concerning,” shares the link on the single-letter social network, and then orders pizza through an app that tracks his location, his ordering history, his payment behavior, and his habits in real time and forwards all of it to 14 third-party providers, which is stated in the terms of service on page 23, which he has not read because he clicked “I agree,” as always.

I say this not with contempt. I say it as a finding, with that particular smile one develops when one has learned to observe the world with a certain forensic distance. The Otto Sapiens is not malicious, he is comfortable, which is a different category, and he lives within an infrastructure that systematically rewards his comfort while systematically suppressing his awareness of its costs.

What is interesting about the letters from Iran is that there is no room for the Otto Sapiens there. Someone living in a country where a wrong click can result in a visit from the authorities develops a fundamentally different attention to what is happening beneath the surface of digital tools. This attention is not innate, it is compelled, and I find myself wondering, not without a certain forensic coldness, how many people in Europe will develop this attention only once it too becomes compelled.

An Advance Notice For Readers Who Wish to Examine Their Own Indifference

I pause here for a moment, as I do in every text I write at some point, and ask directly: what are you doing with your files? Not hypothetically. Today. The tax return you completed last month, containing your income, your expenditures, your account number: is it in a Dropbox? A Google Drive? An iCloud folder that synchronizes automatically and whose contents became, after what London extracted from Apple, accessible to authorities within a few legal steps? Or does it sit on your computer, unencrypted, because the notion of ever being subject to the scrutiny of others feels comfortingly abstract?

I do not ask this to generate panic. Panic is a tool I find useless, in forensics as in public discourse. I ask because the people in Iran who write to me do not ask this question because they are paranoid. They ask it because they know the answer makes a difference. This knowledge costs them something I would not wish on them. But it also gives them a clarity that the Otto Sapiens of European prosperity is not currently developing, because there is no pressure compelling that clarity. That pressure is not there yet, but it is coming.

The One Thing I Trust Without Reservation

If I reduce all the letters, all the inquiries, all the praise, and all the technical questions of recent weeks to their smallest common denominator, one sentence remains, a sentence I would not have expected from an unknown Iranian when I was writing the Encryptor, and that nonetheless does not surprise me: “I trust mathematics more than I trust any government.”

That is not a political statement but an epistemological one, and it is one to which I subscribe without reservation, not as an expression of hostility toward the state, but from a very simple empirical observation developed over a lifetime of working with numbers. I have been programming since the age of thirteen, I am fifty-six today, and in the 43 years between then and now mathematics has been the one truth that nobody had to teach me, that was simply there, and that has not lied to me once, which is more than I can say of governments.

The Encryptor is therefore not what a security tool is often imagined to be, an emergency measure for extreme situations. It is what a security tool can be when it is not built for the Otto Sapiens of the affluent society, but for the person who has learned that comfort and security are different objectives, and that one purchases the latter by giving up some of the former. Whoever waits for their state to officially confirm the need for encryption is waiting for a confirmation that structurally cannot come, because a state that wants to read its citizens’ files does not announce it. It stays silent, and in that silence builds the infrastructure that makes reading possible anyway.

Brussels maintains its silence while Tehran does not, and the difference between the two is smaller than I would have imagined before this spring.

References

- Electronic Frontier Foundation. (2025). Chat Control plans pose existential catastrophic risk to encryption, says Signal. computerweekly.com. Retrieved May 15, 2026.

- Electronic Frontier Foundation. (2026, April 7). EU Parliament blocks mass-scanning of our chats: What’s next? eff.org. Retrieved May 15, 2026.

- Freedom House. (2026). Freedom in the World 2026: Iran country report. freedomhouse.org. Retrieved May 15, 2026.

- NIST. (2001). Announcing the Advanced Encryption Standard (AES) (FIPS 197). National Institute of Standards and Technology. Retrieved May 15, 2026.

- NIST. (2025, January 6). SP 800-38D Rev. 1 (Draft): Pre-Draft Call for Comments: GCM and GMAC Block Cipher Modes of Operation. csrc.nist.gov. Retrieved May 15, 2026.

- OWASP. (2023). Password Storage Cheat Sheet: PBKDF2 iteration count recommendations. cheatsheetseries.owasp.org. Retrieved May 15, 2026.

- Panorama/STRG_F. (2024, September). Ermittlungen im Darknet: Strafverfolger hebeln Tor-Anonymisierung aus [Investigations in the darknet: Law enforcement bypasses Tor anonymization]. NDR/ARD. Retrieved May 15, 2026.

- Wikipedia. (2026). PERKEO-Datenbank. In Wikipedia, The Free Encyclopedia. Retrieved May 15, 2026.

- Wikipedia. (2026). Chat Control. In Wikipedia, The Free Encyclopedia. Retrieved May 15, 2026.

- Wikipedia. (2026). Internet censorship in Iran. In Wikipedia, The Free Encyclopedia. Retrieved May 15, 2026.

- Yifan Liu et al. (2026, March 30). Iran’s January 2026 Internet Shutdown: Public data, censorship methods, and circumvention techniques. arXiv:2603.28753. Retrieved May 15, 2026.