Probability Zero: Why Our Genome Could Not Have Arisen by Chance

A mathematical, informational, and philosophical examination of the most quietly accepted miracle in modern biology

How probable do I consider it that our genome arose by chance? Anyone who has looked closely at the ingenuity of nature, or of a single organism such as the human being, perhaps in a course of study that is by now largely forgotten, knows what I am about to say. How probable is it that it simply came into being? I tell you: it is scientifically untenable, scientifically unjustifiable, and therefore logically not possible. Why is that so? Where do I stand on this myself? Let me explain, because this is not a question that admits of vague answers, and it matters more than almost any other question one can ask about what we are.

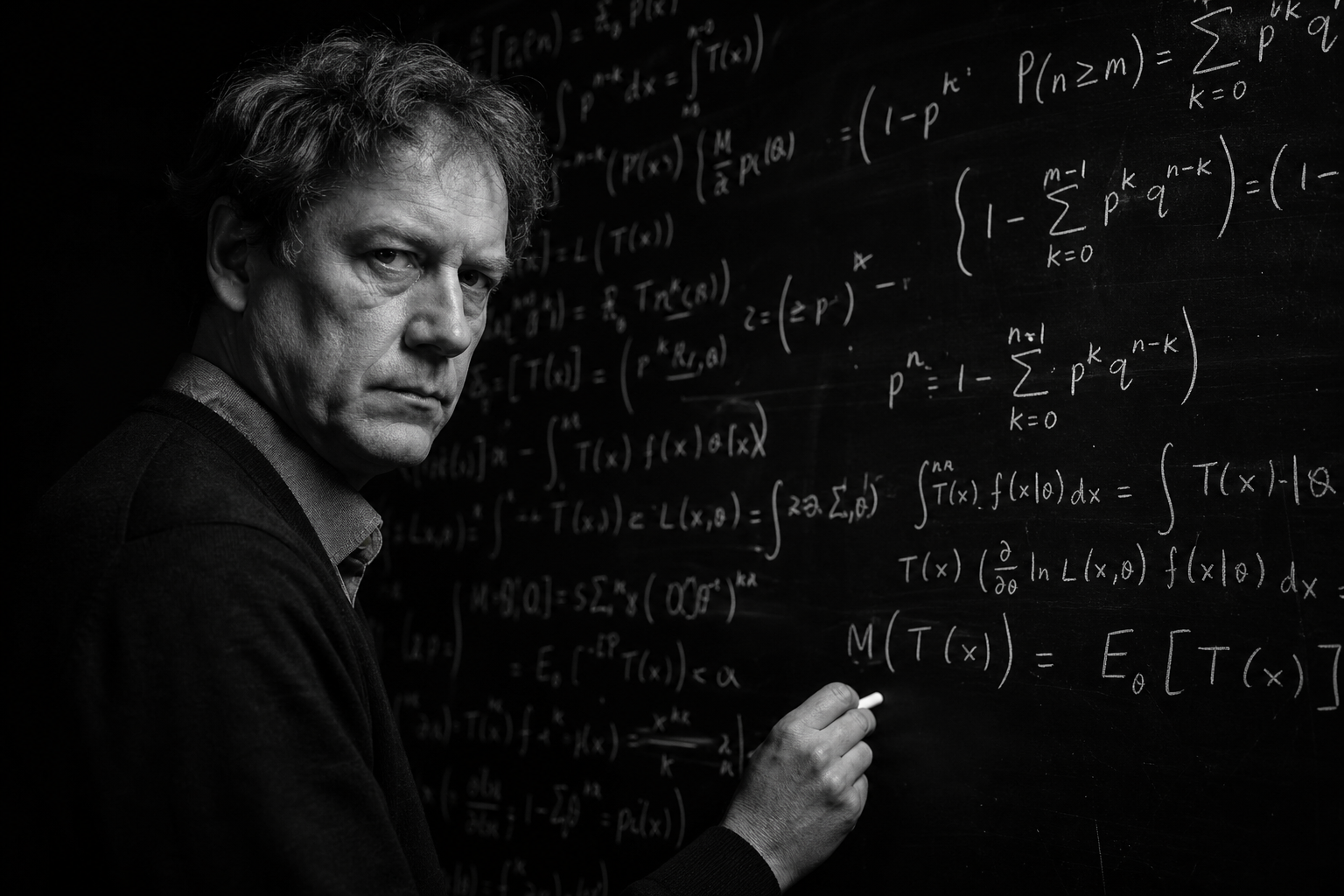

I am going to take you through this carefully. I am going to be a mathematician for a few pages, then a philosopher, then someone trying to think the way Einstein thought, which means refusing to accept the comfortable answer just because it is the consensus answer. The consensus, in matters of the deepest origin of biological information, is wrong. It is wrong not because the people who hold it are stupid, but because they are working within a frame that cannot accommodate the actual numbers. And once you see the numbers, you cannot un-see them.

I will set out my position at the start so that no one has to wonder where I am going. The probability that the human genome arose through a sequence of random chemical events, with or without natural selection acting upon intermediate stages, is so low that it is not a probability in any meaningful sense of the word. It is mathematically indistinguishable from zero. The question that follows is not whether life requires explanation beyond chance, but what kind of explanation is honest enough to confront the actual scale of what needs to be accounted for.

What We Are Actually Talking About

The human genome consists of approximately 3.2 billion base pairs. That is the number you read in popular accounts, and it is correct, but it is also misleading, because the number alone does not convey what it represents. Each of those 3.2 billion positions is occupied by one of four nucleotide bases: adenine, thymine, guanine, or cytosine. Four possibilities at each position. The number of possible distinct DNA sequences of length 3.2 billion is therefore four raised to the power of 3.2 billion.

[math]N_{\text{sequences}} = 4^{3.2 \times 10^9} \approx 10^{1.9 \times 10^9}[/math]

I want you to sit with that for a moment, because most people skim past such numbers without grasping what they actually represent. Four raised to the power of 3.2 billion is, when expressed in standard scientific notation, approximately ten to the power of 1.9 billion. That is a one followed by 1.9 billion zeros. If you tried to write it out on paper, the number itself would fill many thousands of books. The number of atoms in the observable universe is a paltry ten to the power of 80, which means the search space of possible human genome sequences exceeds the number of atoms in the universe by a factor of ten to the power of 1.9 billion minus 80. The eighty is, in this comparison, vanishingly negligible. We are not in the same arithmetic neighborhood. We are not on the same continent of arithmetic.
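These quantities are far too large for any ordinary number type, so the comparison is easiest to check with logarithms. Below is a minimal Python sketch that uses only the figures quoted above: a genome length of 3.2 × 10^9, four bases, and about 10^80 atoms.

```python
import math

GENOME_LENGTH = 3.2e9   # base pairs, the figure used in the text
ALPHABET_SIZE = 4       # A, C, G, T
ATOMS_LOG10 = 80        # atoms in the observable universe, ~10^80

# log10(4^L) = L * log10(4); the number itself cannot be built directly
log10_sequences = GENOME_LENGTH * math.log10(ALPHABET_SIZE)
excess_log10 = log10_sequences - ATOMS_LOG10

print(f"possible sequences ~ 10^{log10_sequences:.4g}")
print(f"excess over atom count ~ 10^{excess_log10:.4g}")
```

The exponent comes out at about 1.93 × 10^9, which agrees with the rounded figure in the formula. Subtracting 80 from an exponent of nearly two billion leaves no visible change at four significant figures.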

Out of those four-to-the-3.2-billion possible sequences, the overwhelming majority would code for nothing functional, would produce no viable organism, would result in protein assemblies that fold incorrectly, fail to perform their required functions, or kill the organism outright. The number of sequences that would code for a functional human being is a vanishingly small subset of that astronomical search space. We do not know exactly how small, because we cannot enumerate the functional sequences directly, but we have measurements at smaller scales that give us a sense of the rarity, and these measurements all point in the same direction.

This is where the mathematics begins to break down our intuitions, and where most discussions of the origin of life lose their nerve and pivot toward reassuring vagueness. I am not going to do that. I am going to keep pushing.

A Single Protein: Douglas Axe’s Experiment

Douglas Axe, formerly at the Centre for Protein Engineering at Cambridge University, performed a series of experiments published in 2004 in the Journal of Molecular Biology that addressed the following question with experimental rigor: among all the possible amino acid sequences of a given length, what fraction would actually fold into a functional protein (Axe, D. D., 2004, Estimating the prevalence of protein sequences adopting functional enzyme folds, Journal of Molecular Biology, 341, 1295-1315)?

Axe focused on a 150-amino-acid section of the enzyme beta-lactamase, an enzyme that confers antibiotic resistance on bacteria. Using a refined mutagenesis technique, he produced a careful estimate of the ratio between sequences that produce a stable, functional fold and the total set of possible amino acid sequences of that length. His estimate was one in ten to the power of seventy-seven.

[math]P_{\text{protein}} = \frac{1}{10^{77}}[/math]

Let me convert that to language a person can hold in their head. Out of every one followed by seventy-seven zeros possible 150-amino-acid sequences, only one will fold into a functional protein. The number of seconds in the entire history of the universe is approximately ten to the power of seventeen. The number of atoms in the Earth is approximately ten to the power of fifty. Suppose every atom in the Earth could try a new amino acid sequence every second since the Big Bang. The total number of trials would be about ten to the sixty-seventh, which still falls ten orders of magnitude short of what is needed to expect a single functional protein by chance.
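Working in exponents makes this kind of trial-budget comparison easy to audit. The sketch below assumes one trial per atom per second for about 10^17 seconds. It uses rough order-of-magnitude atom counts: about 10^50 for the Earth and about 10^67 for the Milky Way. These inputs are estimates, not measurements.

```python
AXE_LOG10 = 77       # Axe (2004): ~1 functional fold per 10^77 sequences
SECONDS_LOG10 = 17   # ~10^17 seconds since the Big Bang

def margin(atoms_log10, seconds_log10=SECONDS_LOG10, target_log10=AXE_LOG10):
    """Orders of magnitude by which the trial budget exceeds (+) or
    falls short of (-) the search size, at one trial per atom per second."""
    trials_log10 = atoms_log10 + seconds_log10
    return trials_log10 - target_log10

# Rough order-of-magnitude atom counts for two candidate pools.
for label, atoms_log10 in [("Earth", 50), ("Milky Way", 67)]:
    print(f"{label}: margin {margin(atoms_log10):+d} orders of magnitude")
```

The outcome depends heavily on which pool of atoms is chosen. An Earth-sized pool falls about ten orders of magnitude short of 10^77. A galaxy-sized pool would overshoot it by about seven orders. For that reason the pool should always be stated explicitly.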

And this is for a single 150-amino-acid protein. A modest protein. A representative protein, not an unusually long or complex one. The human body contains approximately one hundred thousand distinct proteins (Ponomarenko, E. A., et al., 2016, The Size of the Human Proteome: The Width and Depth, International Journal of Analytical Chemistry, 7436849), each of which has its own folded structure, its own function, its own role in the larger system. Every single one of those proteins, on Axe’s numbers, is itself a one-in-ten-to-the-seventy-seventh shot. The probability of all of them being assembled by chance is the product of all those probabilities, which gives us a number so small that I will not bother writing it out. The mathematical convention for such numbers is to call them effectively zero.

There are critics of Axe’s specific numbers, and the discussion of his methodology has been ongoing for two decades. Some argue that the rarity is not as extreme as he calculated, that there are clusters of functional sequences in the search space that random mutation could navigate. The debate is technical, and I do not pretend it is settled. But here is the point that gets lost in the technicalities: even if Axe is wrong by a factor of ten to the twentieth power, even if the actual rarity is ten to the fifty-seventh, we are still in a regime where chance cannot do the work being asked of it. Lowering the number by twenty orders of magnitude does not rescue the chance hypothesis. It moves the argument from impossible to impossible, just at a slightly different scale.

A Single Cell: Hoyle’s Calculation

Sir Fred Hoyle, the Cambridge astrophysicist, was no friend of religious creationism. He was an atheist who happened to do arithmetic. In his 1982 work co-authored with the astrophysicist N. C. Wickramasinghe, Hoyle calculated the probability that a complete set of approximately two thousand functional enzymes, the minimum required for a self-sustaining cell, could arise simultaneously by random chemical processes (Hoyle, F., and Wickramasinghe, N. C., 1982, Evolution from Space: A Theory of Cosmic Creationism, Simon and Schuster). His result was one in ten to the power of forty thousand.

[math]P_{\text{Hoyle}} = \frac{1}{10^{40{,}000}}[/math]
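As the calculation is usually quoted, Hoyle and Wickramasinghe reached this exponent in two steps. They put the chance of obtaining each of the roughly two thousand enzymes at about one in ten to the twentieth. They then multiplied those chances together, on the assumption that the enzymes arise independently:

[math]P_{\text{Hoyle}} = \left(10^{-20}\right)^{2000} = 10^{-20 \times 2000} = 10^{-40{,}000}[/math]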

The figure of forty thousand is not an exaggeration for rhetorical effect. It is the actual exponent: one followed by forty thousand zeros. The mathematician Émile Borel proposed ten to the negative fiftieth as the threshold below which an event can be treated as impossible on a cosmic scale. Hoyle's calculation puts the spontaneous origin of even the simplest cell nearly forty thousand orders of magnitude (39,950, to be exact) below that threshold.

Hoyle, the atheist, drew the conclusion that a rational man would draw from such a number. He wrote that anyone who follows the calculation directly, without being deflected by fear of the wrath of scientific opinion, must conclude that the order observed in biological materials is the outcome of intelligent design. That word, design, is a charged word in this debate, and Hoyle’s use of it has been seized upon by religious creationists ever since. But Hoyle was not advocating for any God. He was advocating for the conclusion that the math forces. The conclusion was that something that processes information, something that selects from a vast space of possibilities, was required to produce what we observe.

Harold Morowitz at Yale performed a related calculation that produced a still more devastating result. He asked: if you took a large quantity of bacteria, broke every chemical bond in them, and then allowed the atoms to cool and reform new bonds in equilibrium, what is the probability that a living bacterium would emerge at the end? His answer was one in ten to the power of one hundred billion (Morowitz, H. J., 1968, Energy Flow in Biology, Academic Press). One hundred billion is the exponent. Even articulating that number requires a special vocabulary, because human language was not built for these scales.

[math]P_{\text{Morowitz}} = \frac{1}{10^{10^{11}}}[/math]

Murray Eden at MIT calculated the probability of producing functional polypeptide sequences by random trial at one in ten to the power of three hundred and thirteen (Eden, M., 1967, Inadequacies of neo-Darwinian evolution as a scientific theory, in Mathematical Challenges to the Neo-Darwinian Interpretation of Evolution, Wistar Institute Press). Different methodology, different starting assumptions, same conclusion. The numbers from independent investigators converge on a verdict that the chance hypothesis cannot account for what we observe.
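Setting the four estimates side by side on a common log scale, against the ten-to-the-negative-fiftieth threshold discussed above, shows how widely they spread. The figures are the ones quoted in this section. The sketch only does the subtraction and makes no judgement about the assumptions behind any of the estimates.

```python
BOREL_LOG10 = 50  # cosmic-scale bound usually attributed to Borel: 10^-50

# log10(1/P) for each estimate quoted in this section
ESTIMATES = {
    "Axe 2004, one 150-residue domain": 77,
    "Eden 1967, functional polypeptides": 313,
    "Hoyle & Wickramasinghe 1982, ~2000 enzymes": 40_000,
    "Morowitz 1968, bacterium from broken bonds": 10**11,
}

def orders_below_bound(odds_log10, bound_log10=BOREL_LOG10):
    """How many orders of magnitude an estimate of 1 in 10^odds_log10
    lies below the 10^-bound_log10 threshold."""
    return odds_log10 - bound_log10

for source, odds_log10 in ESTIMATES.items():
    print(f"{source:<44} 1 in 10^{odds_log10:,}  "
          f"({orders_below_bound(odds_log10):,} orders below 10^-50)")
```

The exponents themselves span about nine orders of magnitude, from 77 to 10^11. That spread reflects how different the modelled events are, ranging from a single folded domain to the reassembly of a whole bacterium.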

The Levinthal Trap: Even the Folding Is a Paradox

Here is something most people, and many biology students, do not know, because textbooks tend to gloss over it. Even after a protein has the correct amino acid sequence, it still has to fold into the correct three-dimensional shape in order to function. And the folding itself is, on its face, a paradox.

Cyrus Levinthal pointed this out in 1969 (Levinthal, C., 1969, How to Fold Graciously, in Mössbauer Spectroscopy in Biological Systems, University of Illinois Press). For a typical protein of about 150 amino acids, each amino acid can adopt several distinct rotational positions, which means the protein has approximately ten to the power of three hundred possible conformations. If a protein were to find its correct fold by trying conformations randomly at the rate at which atomic motions occur, the time required to sample even a small fraction of those conformations would exceed the age of the universe by many orders of magnitude.
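Levinthal's argument is itself a short calculation. This sketch follows a common textbook retelling and assumes the chain can switch conformation about once every 10^-13 seconds, a bond-vibration timescale. That rate is an assumption for illustration; it is not a figure taken from Levinthal's paper.

```python
import math

CONFORMATIONS_LOG10 = 300     # ~10^300 conformations, the figure quoted in the text
SAMPLING_RATE_PER_S = 1e13    # assumption: one new conformation every ~0.1 ps
UNIVERSE_AGE_S = 4.35e17      # ~13.8 billion years in seconds

# Time to visit every conformation once, compared with the age of the universe.
search_log10_s = CONFORMATIONS_LOG10 - math.log10(SAMPLING_RATE_PER_S)
ratio_log10 = search_log10_s - math.log10(UNIVERSE_AGE_S)

print(f"exhaustive search: ~10^{search_log10_s:.0f} s")
print(f"about 10^{ratio_log10:.0f} times the age of the universe")
```

Suppose the assumed rate were wrong by ten orders of magnitude. An exhaustive search would still take hundreds of orders of magnitude longer than the universe has existed, and that gap is the heart of the paradox.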

And yet proteins fold. They fold quickly, in milliseconds to seconds, into precisely the conformation required for their function. The resolution of Levinthal’s paradox, as worked out over the subsequent decades, is that proteins do not fold by random search. They fold along an energy landscape that biases them toward the correct conformation. The amino acid sequence itself encodes not just the final structure but the path to that structure. The folding is guided by physics, but the physics that guides it is exquisitely tuned to the specific sequence in a way that random sequences would not be.

The implication is profound and almost never spelled out. For a protein to fold properly, the amino acid sequence must be such that the energy landscape funnels it toward the correct conformation. Most random sequences would not have this property. They would either not fold at all, fold into multiple conformations none of which is functional, or fold into kinetic traps from which they would never escape. The fraction of amino acid sequences that simultaneously code for a functional protein and fold reliably into the correct conformation is a small subset of the already astronomically small fraction of sequences that could in principle code for a functional protein.

The chance hypothesis must account not just for the existence of functional sequences, but for the existence of functional sequences whose physics also guides them to their functional conformations along an energy landscape that does not get trapped in non-functional intermediates. This is a compounding requirement. It does not merely add to the implausibility; it multiplies it.

The Interactome: Proteins Must Work Together

Suppose, generously, that you had solved every problem above. Suppose every protein in a cell had emerged by chance with the right sequence and the right folding behavior. You would still have nothing, because individual proteins do not constitute life. Life requires that proteins interact with one another in highly specific ways, that signaling cascades operate in correct order, that metabolic pathways flow without bottlenecks or dead ends, that regulatory networks respond to environmental conditions with appropriate timing.

The interactome, the set of all protein-protein interactions in a cell, is itself a Levinthal-scale problem. McLeish and colleagues, in a 2012 paper in HFSP Journal, examined the combinatorial scale of self-assembly of the protein constituents of a yeast cell and concluded that the number of possible non-functional configurations vastly exceeds the number of functional ones, and that the functional interactome could only have emerged by an iterative hierarchical assembly of pre-existing sub-assemblies, never by random aggregation (McLeish, T. C. B., Cann, M. J., and Rodgers, T. L., 2012, The Levinthal paradox of the interactome, HFSP Journal, 6, 1-3).

What this means in practical language is that the cell as a working system did not assemble itself component by component, with each component being independently selected for some local function before the whole was built up. The cell, on the contrary, requires the simultaneous presence of a great many functioning components arranged in a particular relationship to one another in order to be a cell at all. There is no half-cell that confers half the function. There is a cell or there is no cell.

The implications for the chance hypothesis are decisive. Even if random chemistry could produce all the necessary proteins, the proteins must additionally interact in just the right ways to support cellular function. Multiplying through the probabilities pushes the result deeper into mathematical impossibility, by additional orders of magnitude that are themselves astronomical.

The Genome’s Hidden Depth: Alternative Splicing

When the first draft of the human genome was published in 2001, scientists were surprised to find only about thirty thousand to forty thousand protein-coding genes (International Human Genome Sequencing Consortium, 2001, Initial sequencing and analysis of the human genome, Nature, 409, 860-921). The finished sequence published in 2004 revised that figure down to roughly twenty thousand to twenty-five thousand, and current counts sit near twenty thousand. For comparison, the nematode worm Caenorhabditis elegans, an organism so simple that it is essentially a tube with neurons, has approximately twenty thousand genes. The same number, give or take. How could humans, with all our complexity, get by with the same number of genes as a worm?

The answer, which has become clearer over the past two decades, is that the human genome does enormously more with each gene than the worm genome does. The mechanism is alternative splicing. A typical human gene is composed of multiple coding regions called exons, separated by non-coding regions called introns. When a gene is transcribed, the introns are removed, and the exons are joined together to form the messenger RNA that codes for the protein. But the cell can choose, depending on context, which exons to include and which to leave out. A gene with seven exons can produce many different proteins by including exons one through five, or one and three through seven, or two through six, and so on.
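A toy count shows how quickly the options multiply. It rests on a deliberate simplification: every in-order subset of exons is treated as allowed, whereas real splicing is constrained by splice-site signals, reading frame and regulation. The helper name `exon_patterns` is hypothetical and chosen only for illustration.

```python
from itertools import combinations

def exon_patterns(n_exons, keep_first_last=False):
    """List in-order exon combinations for a gene with n_exons exons.

    Toy model (assumption): every subset is allowed. Real splicing is far
    more constrained, so this is an illustration, not a realistic estimate.
    """
    if keep_first_last and n_exons < 2:
        raise ValueError("need at least two exons to fix both ends")
    exons = range(1, n_exons + 1)
    optional = exons[1:-1] if keep_first_last else exons
    patterns = []
    for k in range(len(optional) + 1):
        for chosen in combinations(optional, k):
            pattern = (1, *chosen, n_exons) if keep_first_last else chosen
            if pattern:  # an empty transcript encodes nothing
                patterns.append(pattern)
    return patterns

print(len(exon_patterns(7)))                        # 127 = 2**7 - 1
print(len(exon_patterns(7, keep_first_last=True)))  # 32 = 2**5
```

All three combinations named in the text appear in the unconstrained list: exons one through five, one plus three through seven, and two through six. The count for n exons grows as 2^n - 1.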

Approximately ninety to ninety-five percent of human genes undergo alternative splicing (Wang, E. T., et al., 2008, Alternative isoform regulation in human tissue transcriptomes, Nature, 456, 470-476). The result is that the human body produces, from twenty thousand genes, well over one hundred thousand distinct protein isoforms (Ponomarenko et al., 2016). Some estimates run higher. The exact number is uncertain, but the order of magnitude is clear. The genome encodes not just a set of proteins, but a system for combinatorially generating proteins from modular components, with the choice of combination depending on cellular state, developmental stage, and environmental context.

This means that the genome is not a static blueprint. It is a generative grammar. It does not specify a fixed set of products; it specifies a system that produces different products under different conditions. To assemble such a generative grammar by chance requires not just stumbling upon working protein sequences, but stumbling upon a combinatorial system in which the sequences can be reshuffled to produce a coherent variety of working proteins, each appropriate to its specific use.

I will not bother trying to put a probability number on this. The number would be so far below what we have already established as effectively zero that it would be a meaningless decoration. The point is that the genome is several layers of complexity beyond what the chance calculations have so far been forced to confront.

The Epigenetic Layer: Above the Code

Above the genetic code, there is the epigenetic code. The epigenetic system consists of chemical modifications to DNA and to the histone proteins around which DNA is wound, modifications that control which genes are expressed, when, where, and at what rate. DNA methylation, histone acetylation, histone methylation, histone phosphorylation, and dozens of other chemical modifications interact in complex patterns to determine which parts of the genome are read in which cells at which times.

This system is not encoded in the DNA sequence itself. It is layered on top of the sequence, transmitted across cell divisions through specific molecular machinery, and modified by environmental and developmental signals. The epigenetic state of a stem cell is different from the epigenetic state of a neuron, even though the underlying DNA sequence is identical. The epigenetic state determines which proteins are made, and which proteins are made determines what kind of cell you are.

The epigenetic layer is itself a code, with its own grammar, its own readers and writers and erasers, its own combinatorial logic. Recent work has shown that the epigenome interacts with alternative splicing, with the histone modifications influencing which exons are included or excluded in the spliced messenger RNA (Luco, R. F., et al., 2010, Regulation of alternative splicing by histone modifications, Science, 327, 996-1000). This means there is not one code in the cell. There are at least two, layered, interacting, and each as complex as the other.

To produce a working organism by chance is not to produce a single blueprint. It is to produce two interacting codes, plus a system for generating proteins combinatorially from modular components, plus a system for ensuring those proteins fold correctly, plus a system for ensuring those proteins interact correctly, plus a system that propagates all of this faithfully across cell divisions and across generations, all of which must function from the very first instance because there is no half-functional intermediate that confers any survival advantage.

The Three-Dimensional City: Chromatin Organization

If the epigenome were not enough, there is one more layer that has only become visible in the past decade. The DNA in a cell is not a linear string. It is folded in three dimensions inside the nucleus, and the folding is not random. Specific regions of the genome are brought into physical proximity with other specific regions, even when those regions are far apart on the linear sequence, in order to enable regulatory interactions. The three-dimensional architecture of the genome is itself functional, and disruptions to it cause disease (Dekker, J., et al., 2017, The 4D nucleome project, Nature, 549, 219-226).

The chromatin organization includes structures called topologically associating domains, super-enhancers, chromatin loops, and chromosome territories. Each of these is a structural feature whose existence depends on specific protein machinery that recognizes specific sequences and brings them into specific spatial relationships. The folding is dynamic, changing during the cell cycle, during development, and in response to signals.

What this means is that the genome is not just an information storage device with a code. It is a four-dimensional information system, with the fourth dimension being time, in which the structure of the storage itself encodes information about how the stored content should be used. The architecture of the system is part of its function.

I will say it again, plainly. To produce by chance a working human cell, one must produce by chance: a 3.2-billion-base-pair sequence with sufficient functional content to support cellular life, a set of approximately one hundred thousand functional protein isoforms whose folding paths are biased toward functional conformations, a combinatorial splicing system that generates these isoforms from modular components, an epigenetic code that determines which genes are expressed in which contexts, a three-dimensional chromatin architecture that brings the right regulatory elements into the right physical relationships at the right times, and a system that propagates all of this with high fidelity across cell divisions and across generations.

The probability of producing all this by random chemistry, even given the entire age of the universe and every atom of matter as a possible site of a chemical experiment running every Planck time, is so far below mathematical impossibility that the word impossibility itself becomes too generous. There is no chance of this. There never was a chance of this. The hypothesis that this is what occurred is not a hypothesis. It is a stipulation maintained for non-empirical reasons, and the non-empirical reasons are themselves worth examining.

What Evolution Cannot Answer

The standard response to everything I have just written is that evolution by natural selection takes care of it. Random mutation provides variation, natural selection retains useful variants, and over deep time the genome gradually accumulates the complexity we observe. The argument has a long pedigree and a lot of cultural authority. It is also, in this context, a category mistake.

Natural selection acts on existing replicators. It cannot act before there is a replicator. The whole machinery of mutation, selection, and inheritance presupposes a self-replicating system that already has the capacity to copy information, retain useful variants, and pass them on. Until that machinery exists, there is no selection. There is only chemistry. And chemistry on its own is not a selection mechanism. It is a set of physical processes that move toward thermodynamic equilibrium, which is the opposite of what life requires.

The minimum self-replicating system, on the best current evidence, is the kind of organism that the 2024 Moody study identified as the Last Universal Common Ancestor, or LUCA (Moody, E. R. R., et al., 2024, The nature of the last universal common ancestor and its impact on the early Earth system, Nature Ecology and Evolution, 8, 1654-1666). LUCA had a genome of at least 2.5 megabases, encoded approximately 2,600 proteins, and already possessed an early immune system. This is not a primitive intermediate. This is a fully formed cellular organism with a level of complexity comparable to modern bacteria.

For natural selection to begin operating, LUCA had to exist. LUCA’s existence cannot be explained by natural selection, because natural selection requires LUCA, or something at least as complex, to already be running. This is not a minor technicality. It is the foundational gap in the chance-plus-selection account of life, and it is a gap that no amount of additional evolutionary biology can fill, because evolutionary biology operates downstream of the gap.

Some have proposed that simpler self-replicators, RNA-based or other, preceded LUCA. The RNA world hypothesis posits a stage at which replicating RNA molecules existed before the more elaborate DNA-protein system was established. This is interesting and may be partly correct, but it does not solve the problem. It pushes the problem back one level. Now we need to explain how a self-replicating RNA molecule arose by chance. RNA molecules capable of self-replication are themselves complex and require specific sequences. Experiments on random-sequence libraries show how rare even simple functions are. Keefe and Szostak in 2001 estimated that roughly one in ten to the eleventh random-sequence proteins binds ATP (Keefe, A. D., and Szostak, J. W., 2001, Functional proteins from a random-sequence library, Nature, 410, 715-718). And ATP binding is a single, simple function. A self-replicating RNA is enormously more demanding.

Every step of the regression encounters the same wall. There is no version of the chance-plus-selection account that explains the origin of the first self-replicating system, because every self-replicating system that we know about, or can plausibly imagine, is itself too complex to arise by chance in the available time.

The Deep Time Fallacy

I want to address directly the most common rebuttal I hear when I make this argument: that the time available is so vast that even very improbable events become probable. This is a fallacy, and a clear one, but it is so widely repeated that it deserves a careful refutation.

The age of the universe is approximately fourteen billion years, which is roughly four times ten to the seventeenth seconds. The age of the Earth is approximately 4.5 billion years, which is roughly ten to the seventeenth seconds. Whichever you prefer, the available time is on the order of ten to the seventeenth seconds. Now, how many trials can be packed into this time? If we suppose that every atom in the observable universe, ten to the eightieth atoms, performs one chemical experiment per Planck time, ten to the forty-third per second, the total number of trials available since the beginning of the universe is approximately ten to the eightieth, times ten to the forty-third, times ten to the seventeenth, which is ten to the one hundred and fortieth.

This is a generous overestimate, because not every atom is engaged in chemistry every Planck time, and the universe was not in a state suitable for biological chemistry for most of its history. But even using this absurdly generous figure, we have ten to the one hundred and fortieth trials available. The probability of a single functional protein, on Axe’s number, is one in ten to the seventy-seventh. So far so good, we have enough trials, in principle, to produce one such protein.
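The trial budget can be recomputed from unrounded constants as a cross-check. This is a sketch under the text's own assumptions: 10^80 atoms, one experiment per atom per Planck time (about 5.39 × 10^-44 s), and an age of about 13.8 billion years.

```python
import math

ATOMS = 1e80                        # atoms in the observable universe (order of magnitude)
PLANCK_TIME_S = 5.39e-44            # Planck time in seconds
SECONDS_PER_YEAR = 3.156e7
UNIVERSE_AGE_S = 13.8e9 * SECONDS_PER_YEAR

# One "experiment" per atom per Planck time, for the whole age of the universe.
log10_trials = (math.log10(ATOMS)
                + math.log10(1 / PLANCK_TIME_S)
                + math.log10(UNIVERSE_AGE_S))
print(f"trial budget ~ 10^{log10_trials:.1f}")
```

With the unrounded constants the budget comes to roughly 10^141 rather than 10^140. A difference of one order of magnitude does not change any of the comparisons in this section.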

But we need not one protein. We need the simultaneous presence of many proteins. Each requires its own ten-to-the-seventy-seventh search through sequence space, and each must moreover fold correctly and interact correctly with the others. When independent requirements must all be met at once, their probabilities multiply. The combined odds are therefore not ten to the seventy-seventh multiplied by the number of proteins; they are ten to the seventy-seventh raised to the power of the number of proteins. For just one hundred proteins, the combined probability is one in ten to the seven thousand seven hundredth. We have ten to the one hundred and fortieth trials available, and we need on the order of ten to the seven thousand seven hundredth trials to have a reasonable chance. We are short by a factor of ten to the seven thousand five hundred and sixtieth.
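The multiplication rule is easiest to see with exponents. The sketch keeps the text's assumption that each protein is an independent one-in-10^77 search, so n proteins require odds of 1 in 10^(77n). It compares that requirement with the rounded 10^140 trial budget. The function name `shortfall` is a hypothetical helper.

```python
AXE_LOG10 = 77       # odds against one functional fold: 1 in 10^77 (Axe 2004)
BUDGET_LOG10 = 140   # rounded trial budget from the text, ~10^140

def shortfall(n_proteins, per_protein_log10=AXE_LOG10, budget_log10=BUDGET_LOG10):
    """Return (log10 of combined odds, orders of magnitude of shortfall).

    Assumes the n searches are independent, so probabilities multiply and
    their exponents add. A negative shortfall means the budget suffices.
    """
    combined_log10 = per_protein_log10 * n_proteins
    return combined_log10, combined_log10 - budget_log10

for n in (1, 2, 100, 100_000):
    combined, gap = shortfall(n)
    print(f"{n:>7,} proteins: 1 in 10^{combined:,}, gap {gap:+,} orders")
```

Under these assumptions the budget is already exceeded at two proteins, since 10^154 is larger than 10^140. The hundred-protein case reproduces the shortfall of 7,560 orders quoted above. The figure of roughly one hundred thousand proteins gives an exponent of 7.7 million.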

Deep time is not infinite time. The numbers we are dealing with overwhelm any plausible amount of time and any plausible number of trial sites in the entire history of the universe. The universe, large and old as it is, is finite. The improbabilities we face exceed its resources by thousands of orders of magnitude.

Information Cannot Arise from Noise

I now want to step back from the specific calculations and address the deeper philosophical issue, because the philosophical issue is in some ways more fundamental than the arithmetic. The arithmetic merely shows that chance fails. The philosophy shows why chance must fail, regardless of the specific numbers.

Information, in the technical sense introduced by Claude Shannon, is a measure of how much a message reduces uncertainty about which of several possible messages was sent. By that measure a random sequence is not empty: a uniformly random string of bases has the highest possible entropy per symbol. What a random sequence lacks is specificity. Any random sequence is as likely as any other, so observing one tells you nothing about a process that chose it for a purpose. A non-random sequence carries information in this second sense. Its deviation from randomness, the statistical signature of selection, tells you that some process chose this sequence rather than another.
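Shannon's measure can be computed directly, and doing so clarifies what this argument does and does not rely on. This minimal sketch computes entropy from base frequencies alone, which is a simplification because it ignores the order of the bases. A periodic "ACGT" repeat scores the 2-bit maximum, exactly the value a uniformly random sequence approaches. Raw entropy therefore cannot separate random sequences from ordered ones, and the distinction drawn here has to rest on specificity or function.

```python
import math
from collections import Counter

def entropy_bits_per_symbol(seq):
    """Shannon entropy of the symbol frequencies in seq, in bits per symbol.

    This measures composition only: it ignores order, so it cannot tell a
    random string from a periodic one with the same base counts.
    """
    counts = Counter(seq)
    n = len(seq)
    return sum((c / n) * math.log2(n / c) for c in counts.values())

print(entropy_bits_per_symbol("ACGT" * 25))  # 2.0, the maximum for four symbols
print(entropy_bits_per_symbol("AC" * 50))    # 1.0
print(entropy_bits_per_symbol("A" * 100))    # 0.0
```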

The genome is a non-random sequence. Its non-randomness is not subtle. It is highly specific, highly structured, hierarchically organized, and functionally integrated. The statistical signature of the genome is not the signature of a random process operating on chemical building blocks. It is the signature of a process that selected this sequence from an astronomical possibility space.

Now, here is the philosophical point. A random process cannot produce information. This is not a contingent observation. It is a definitional truth. A random process, by definition, is one that does not preferentially select among the possibilities. If a process preferentially selects, it is not random; if it does not preferentially select, it cannot produce non-randomness. The information in the genome must therefore come from a non-random source. Natural selection is non-random and is therefore one possible source. But natural selection requires the prior existence of a self-replicating system, as we have already established. Before that system existed, the only sources of order available were physical laws and chance. Physical laws produce regularity, not information; chance produces noise. Neither is information.

The conclusion is not that life requires a magical creator. The conclusion is that life requires an information source that existed before life itself, and the only information sources we know of, the only sources of high-specificity non-randomness, are intelligence and the products of intelligence. This is not a religious claim. It is an empirical observation about where information comes from, in every case in which we can examine the source.

You may object that perhaps there are sources of information we have not discovered, that physics has unsuspected resources, that the laws of nature themselves carry information. These are interesting suggestions, and they deserve investigation. But they push the question back, they do not answer it. If the laws of nature themselves carry information that produces life, where did the laws come from? If the universe has built-in informational tendencies, where did those tendencies come from? At every level of analysis, the question is the same: information has a source, and the source is either intelligence or something that functions like intelligence under a different name.

The Universal Genetic Code: One Choice, Frozen Forever

Among the many features of biological information that demand explanation, one stands out as particularly difficult for the chance hypothesis: the universality of the genetic code. Every known organism on Earth, from deep-sea bacteria to the most complex mammals, uses the same genetic code with only minor exceptions. The same triplet of nucleotide bases codes for the same amino acid in a human cell, in a maize plant, in a roundworm, in a streptococcus.

This universality is striking because the code is essentially arbitrary. There is no chemical necessity that the triplet AUG must code for methionine. Many other assignments would work equally well from a chemical standpoint. The choice of which triplet codes for which amino acid was made once, at the origin of life, and has been frozen ever since (Koonin, E. V., and Novozhilov, A. S., 2009, Origin and evolution of the genetic code: The universal enigma, IUBMB Life, 61, 99-111).
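To put a number on "many other assignments", here is a minimal sketch that counts every way of mapping the 64 codons onto 21 meanings (20 amino acids plus stop) so that each meaning is used at least once. It deliberately ignores every chemical, structural, and historical constraint, so it is an upper bound on the space of conceivable codes, not a count of workable ones; the function name is my own.

```python
from math import comb

def count_surjective_codes(codons: int = 64, meanings: int = 21) -> int:
    """Count the assignments of `codons` codons to `meanings` meanings
    (by default 20 amino acids plus 'stop') in which every meaning is used
    at least once, by inclusion-exclusion over the meanings left unused."""
    return sum(
        (-1) ** k * comb(meanings, k) * (meanings - k) ** codons
        for k in range(meanings + 1)
    )

n_codes = count_surjective_codes()
print(f"{n_codes:.2e}")  # a number on the order of 10^84
```

Python integers are exact at any size, so the inclusion-exclusion sum is computed without rounding; only the final print converts to floating point.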

The implication is unavoidable. All life on Earth descends from a single common ancestor, the LUCA we already discussed, and the code that LUCA used was passed on faithfully to every descendant lineage for four billion years. There was no opportunity for the code to be reinvented in different lineages, because any change to the code would corrupt the readout of every gene in the genome simultaneously. Once the code was set, it had to stay set.

This is significant because it means we are not looking at a process that experimented with many codes and selected the best one. We are looking at a single founding event in which one code was established and then preserved. The specific code that was established is not a generic code. It is, on careful examination, a code that minimizes the deleterious effects of random mutations, in the sense that mutations to a base in a codon often produce either no change in amino acid or a change to a chemically similar amino acid, which limits the damage. This non-randomness in the code itself, this property of error tolerance, is itself improbable on a chance hypothesis.
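The error-tolerance property can be checked directly on the standard code table. A minimal sketch, assuming the standard code (NCBI translation table 1) and measuring only the simplest part of the claim: the fraction of single-base substitutions in sense codons that leave the amino acid unchanged, split by codon position. It does not score the "chemically similar" substitutions mentioned above, and mutations that create a stop codon count as non-synonymous.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code, codons enumerated with the first base varying slowest
# (T, C, A, G order at each position); '*' marks a stop codon.
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}

def synonymous_fraction_by_position(code: dict[str, str]) -> list[float]:
    """For each codon position, the fraction of single-base substitutions in
    sense codons that leave the encoded amino acid unchanged."""
    fractions = []
    for pos in range(3):
        same = total = 0
        for codon, aa in code.items():
            if aa == "*":
                continue  # only mutate sense codons
            for base in BASES:
                if base == codon[pos]:
                    continue  # skip the non-mutation
                mutant = codon[:pos] + base + codon[pos + 1:]
                total += 1
                same += code[mutant] == aa
        fractions.append(same / total)
    return fractions

print(synonymous_fraction_by_position(CODE))
```

The pattern it shows is the familiar one: third-position changes are frequently silent, first-position changes occasionally so (in leucine and arginine codons), and second-position changes never are.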

To produce by chance a code that is itself optimized for error tolerance, before any selection has had the opportunity to act on it, is another improbability that compounds the others. The chance hypothesis must therefore account not only for the existence of life, and not only for the specific genetic information of LUCA, but also for a genetic code that was near-optimal from the first day, given the constraints of biochemistry. That is a great deal to ask of chance.

The Molybdenum Problem

Francis Crick, the Nobel laureate who co-discovered the structure of DNA, and the chemist Leslie Orgel published in 1973 in the journal Icarus a paper titled Directed Panspermia (Crick, F. H. C., and Orgel, L. E., 1973, Directed Panspermia, Icarus, 19, 341-346). In that paper, they proposed that life on Earth might have been seeded deliberately by an extraterrestrial intelligence, and they offered as one of their two main pieces of supporting evidence the observation that biological systems on Earth depend on molybdenum to a degree disproportionate to its abundance on Earth.

Molybdenum is a relatively rare element on Earth, present at about 0.02 percent of the crust by mass. Yet it plays an essential role in many biochemical processes, including nitrogen fixation, sulfite oxidation, and various other enzymatic reactions. Crick and Orgel pointed out that this disproportionate dependence on a rare element is what one would expect if life evolved on a planet where molybdenum was abundant, and was then transported to Earth, rather than if life originated here, where one would expect biochemistry to favor more abundant elements.

This argument is not decisive. Other explanations for the molybdenum dependence are possible. But it is suggestive, and combined with the universal genetic code, it gives us two anomalies that point in the same direction. The most natural interpretation of both anomalies is that life on Earth has a single, foreign origin, that the foundational biochemistry was established once, somewhere, and was then transferred to this planet as a complete and functioning system.

The Einstein Moment

I want to step entirely outside biology now and consider this question from the perspective of physics, in particular from the perspective Einstein articulated when he confronted the question of why the universe is intelligible at all. In 1936 Einstein wrote that the eternal mystery of the world is its comprehensibility, a remark usually paraphrased as “the most incomprehensible thing about the universe is that it is comprehensible” (Einstein, A., 1936, Physics and Reality, Journal of the Franklin Institute, 221, 349-382). What he meant was that there is no logical reason why the universe should be ordered in such a way that mathematical reasoning conducted by the human mind should be able to predict the behavior of physical systems. The universe could have been chaotic. It could have had laws that change from place to place and time to time. It could have been such that no general principles applied. But it is not. It is ordered, and the order is exactly the kind of order that mathematical reasoning can grasp.

Eugene Wigner, the Nobel-winning physicist, made the same point in his 1960 essay The Unreasonable Effectiveness of Mathematics in the Natural Sciences (Wigner, E., 1960, The Unreasonable Effectiveness of Mathematics in the Natural Sciences, Communications on Pure and Applied Mathematics, 13, 1-14). He noted that abstract mathematical structures, developed by mathematicians for purely intellectual reasons with no thought of physical application, turn out repeatedly and unexpectedly to describe the deep structure of physical reality. This is not what we would expect from a universe that arose without any informational input. It is what we would expect from a universe in which information is built in at the level of the laws of nature themselves.

Now combine this with what we have just established about biology. The same universe that has unreasonable mathematical structure also produces, on at least one of its planets, organisms that are themselves repositories of vast amounts of highly specific information, whose probability of arising by chance from physical chemistry is mathematically zero. The two facts are connected. They are facts about the same universe, and they point in the same direction. The universe carries information at every level we can examine, from the laws of physics to the genomes of cells. The information has to come from somewhere. The chance hypothesis cannot supply it, because chance is the absence of an information source.

I do not know what the source is. I have my hypotheses, which I have laid out in other writings, and which converge on the conclusion that the foundational architecture of biological life on Earth was established by an intelligence that did not originate on Earth. But the larger philosophical claim, the claim that I think is genuinely a milestone in clear thinking, is that the chance hypothesis as commonly understood is not a hypothesis. It is an anti-hypothesis, a refusal to ask the question, a stipulation that the explanation is not to be sought because the alternatives are uncomfortable.

Real science does not work that way. Real science follows the data, and the data on the origin of biological information leads us inescapably away from chance.

Be Aware of the Probability

Up to this point I have been naming numbers. Ten to the seventy-seventh. Ten to the forty-thousandth. Ten to the one-hundred-billionth. These numbers are so large that they cease to be numbers and become symbols. The human brain reads them and does not understand them, and that is no failing of the reader, but a biological fact. The human nervous system evolved to grasp magnitudes relevant to daily life: the number of berries on a bush, the distance to the nearest watering hole, the number of members in a group. It did not evolve to intuitively grasp numbers like [math]10^{77}[/math].

I am going to correct this now. I am going to walk you through a series of concrete comparisons, each of which is graspable on its own, and then by chaining the comparisons together I will give you a sense of what the actual numbers require. Here I show you the most brilliant side of my thinking, and at the end of it, a thesis that in my view requires no further discussion, because it is not a matter of opinion but a matter of definition.

Let us begin small

The number of grains of sand on all the beaches of Earth is estimated at approximately [math]10^{18}[/math]. A large number, but not unimaginable. If we counted the grains one at a time, one grain per second, it would take about 30 billion years, a little more than twice the age of the universe. In an entire human lifetime we would not count even a microscopic fraction of them.
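The counting estimate is easy to check. A minimal sketch, assuming a 365.25-day year and, for comparison, a 13.8-billion-year age of the universe and an 80-year human lifetime (the last two are my assumptions, not figures stated in the paragraph):

```python
SECONDS_PER_YEAR = 365.25 * 24 * 3600  # Julian year
SAND_GRAINS = 1e18                     # order-of-magnitude estimate from the text
AGE_OF_UNIVERSE_YEARS = 13.8e9         # assumed current estimate
LIFETIME_YEARS = 80                    # assumed human lifetime

years_to_count = SAND_GRAINS / SECONDS_PER_YEAR    # one grain per second
ratio = years_to_count / AGE_OF_UNIVERSE_YEARS     # multiples of the cosmic age
lifetime_fraction = LIFETIME_YEARS * SECONDS_PER_YEAR / SAND_GRAINS

print(f"{years_to_count:.2e} years, {ratio:.1f} x the age of the universe")
print(f"fraction counted in one lifetime: {lifetime_fraction:.1e}")
```

This gives roughly 32 billion years of counting, and a lifetime covers only a few billionths of the total.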

The number of stars in the observable universe is estimated at approximately [math]10^{24}[/math]. That is one million times more than all the grains of sand on Earth, and this number already escapes intuition. If every star had an Earth, there would be a million times as many Earths as there are grains of sand on our own.

The number of atoms in the observable universe, all atoms, in all stars, in all planets, in all gas between the galaxies, is:

[math]N_{\text{atoms}} \approx 10^{80}[/math]

That number simply means: every piece of matter in the entire observable universe, summed. At this point, every intuitive comparison system fails. We simply remember: all matter, all stars, all galaxies, ten to the eightieth.

Now to the probability of a single protein

Axe’s calculation gives us, for a single functional protein of 150 amino acids:

[math]P_{\text{protein}} = \frac{1}{10^{77}}[/math]

What does that really mean? Imagine taking every single atom in the observable universe, all [math]10^{80}[/math] atoms, and painting one of them red. Then all atoms are mixed together, and you reach in blindfolded and grab exactly one. The probability of grabbing the red atom is one in [math]10^{80}[/math].

The probability of producing a single functional protein by chance is only about one thousand times higher than that. That is not reassuring. One thousand times higher than nearly impossible is still nearly impossible. And that is for a single protein.

The lottery comparison everyone understands

Perhaps more graspable is an example many people know: the Powerball lottery in the United States has jackpot odds of about one in [math]3 \times 10^{8}[/math], roughly one in [math]10^{8.5}[/math]. Anyone who hits the jackpot once is a multi-millionaire. The probability of hitting it three times in a row, without the lottery commission calling the police, is about one in [math]10^{25.5}[/math].

To match the probability of a single functional protein, you would need to win the Powerball jackpot nine times in a row:

[math]P_{\text{9 Powerballs}} = (10^{-8.5})^9 = 10^{-76.5} \approx P_{\text{protein}}[/math]
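The lottery arithmetic can be redone from the ticket rules rather than the rounded exponent. A minimal sketch, assuming the current Powerball format (5 white balls drawn from 69, plus 1 red ball from 26) and treating consecutive draws as independent; the helper name is mine:

```python
from math import comb, log10

# Assumed current Powerball format: 5 white balls from 69, 1 red ball from 26.
JACKPOT_ODDS = comb(69, 5) * 26          # number of equally likely tickets
LOG10_P_JACKPOT = -log10(JACKPOT_ODDS)   # log10 of one jackpot's probability
LOG10_P_PROTEIN = -77                    # Axe's figure, as used in the text

def log10_consecutive_wins(k: int) -> float:
    """log10 of the chance of winning k independent jackpots in a row."""
    return k * LOG10_P_JACKPOT

for k in (1, 3, 9):
    print(k, round(log10_consecutive_wins(k), 1))
```

With the exact odds of 1 in 292,201,338, nine wins come to about [math]10^{-76.2}[/math], within one order of magnitude of the protein figure.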

Nine times in a row. No manipulation, no insider knowledge, pure chance. That is the probability for one functional protein. Now think one step further: the human genome encodes approximately one hundred thousand functional protein isoforms. What happens then?

The multiplication of improbability

As soon as we want to produce two or more independent proteins by chance, their individual probabilities multiply:

[math]P_{\text{total}} = (P_{\text{protein}})^N = (10^{-77})^N = 10^{-77N}[/math]

For only ten proteins:

[math]P_{10} = 10^{-770}[/math]

For one hundred:

[math]P_{100} = 10^{-7{,}700}[/math]

For the roughly 2,600 proteins estimated for LUCA, the oldest reconstructable ancestor of all life (Moody et al., 2024):

[math]P_{\text{LUCA}} = 10^{-200{,}200}[/math]

A number with two hundred thousand zeros before the one. If you wanted to print this number on regular paper at the usual font size, it would fill approximately fifty pages with zeros alone.
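The exponents above follow from one multiplication, and the page count from one division. A minimal sketch under the text's assumption that the per-protein probabilities simply multiply; the figure of 4,000 characters per page is my hypothetical stand-in for "regular paper at the usual font size":

```python
LOG10_P_PROTEIN = -77  # per-protein probability exponent used in the text

def log10_joint(n_proteins: int) -> int:
    """log10 of the chance that n independent proteins all arise by chance."""
    return LOG10_P_PROTEIN * n_proteins

for n in (10, 100, 2_600):
    print(n, log10_joint(n))  # prints 10 -770, 100 -7700, 2600 -200200

# Written as a decimal, 10^-200200 is "0." followed by 200,199 zeros and a 1.
zeros = -log10_joint(2_600) - 1
DIGITS_PER_PAGE = 4_000  # hypothetical dense page of digits
pages = zeros / DIGITS_PER_PAGE
print(zeros, round(pages))
```

Working with integer exponents rather than the probabilities themselves avoids floating-point underflow, which would turn every one of these numbers into zero.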

The available trials

How many trials did the universe have available to overcome this probability? I take the most generous conceivable estimate. Every atom in the universe, all [math]10^{80}[/math] atoms, performs one chemical experiment per Planck time, that is [math]10^{43}[/math] trials per second. The age of the universe is approximately [math]4 \times 10^{17}[/math] seconds.

[math]T_{\text{available}} = 10^{80} \times 10^{43} \times (4 \times 10^{17}) = 4 \times 10^{140} \approx 10^{140}[/math]

This number is absurdly generous. Atoms in the interiors of stars are not engaged in organic chemistry. Atoms in intergalactic space rarely meet other atoms at all. Atoms during the first nine billion years of cosmic history had no Earth on which to experiment, because Earth formed only about 4.5 billion years ago. But let us play the game generously.

We have [math]10^{140}[/math] trials available.

We need [math]10^{200{,}200}[/math] trials to have a reasonable chance at LUCA.

[math]\frac{T_{\text{needed}}}{T_{\text{available}}} = \frac{10^{200{,}200}}{10^{140}} = 10^{200{,}060}[/math]
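The trial budget and the shortfall can be recomputed without the rounding. A minimal sketch that keeps the text's generous assumptions (every atom, one attempt per Planck time, for the whole age of the universe) but uses the Planck time of about [math]5.39 \times 10^{-44}[/math] seconds directly:

```python
from math import log10

LOG10_ATOMS = 80            # ~10^80 atoms in the observable universe
PLANCK_TIME_S = 5.39e-44    # Planck time in seconds (rounded)
AGE_OF_UNIVERSE_S = 4e17    # ~13.8 billion years, as in the text
LOG10_NEEDED = 2_600 * 77   # LUCA: 2,600 proteins at 10^-77 each

# One "experiment" per atom per Planck time for the whole age of the universe.
log10_trials = LOG10_ATOMS + log10(1 / PLANCK_TIME_S) + log10(AGE_OF_UNIVERSE_S)
shortfall = LOG10_NEEDED - log10_trials  # orders of magnitude still missing

print(f"budget ~10^{log10_trials:.1f}, shortfall ~10^{shortfall:,.0f}")
```

Keeping the factor of 4 and the unrounded Planck time gives a budget of about [math]10^{140.9}[/math] and a shortfall of about [math]10^{200{,}059}[/math]; rounding the budget to [math]10^{140}[/math] moves the exponent by less than one.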

We are short by a factor of [math]10^{200{,}060}[/math]. This gap is not closable. It is not closable by additional time, because the universe does not exist for that long. It is not closable by additional trials per second, because the Planck time is the fundamental lower bound. It is not closable by additional atoms, because the universe contains no more atoms. We have exhausted the maximum that a finite universe can offer, and the maximum falls short by [math]10^{200{,}060}[/math].

Hoyle’s number visualized

Hoyle’s calculation gave us:

[math]P_{\text{Hoyle}} = \frac{1}{10^{40{,}000}}[/math]

This number is still devastating, but it is about 160,000 orders of magnitude more optimistic than the LUCA-based calculation ([math]200{,}200 - 40{,}000 = 160{,}200[/math]). Hoyle assigned each of the roughly 2,000 enzymes of a minimal cell a chance of about one in [math]10^{20}[/math], giving [math](10^{-20})^{2{,}000} = 10^{-40{,}000}[/math], and he excluded the complexity of modern proteins, alternative splicing, epigenetics, and three-dimensional chromatin architecture. Even Hoyle, who delivered the most optimistic serious calculation available, arrives at a number that exceeds the universe's entire trial budget of [math]10^{140}[/math] by roughly forty thousand orders of magnitude.

Forty thousand orders of magnitude.

To put that in pictures: imagine that the entire observable universe, with all its [math]10^{80}[/math] atoms, is a single atom in a larger universe. This larger universe contains [math]10^{80}[/math] such atoms, which brings us to [math]10^{160}[/math]. Keep nesting in this way until five hundred factors of [math]10^{80}[/math] have been multiplied together.

[math]\underbrace{10^{80} \times 10^{80} \times \cdots \times 10^{80}}_{500 \text{ factors}} = 10^{80 \times 500} = 10^{40{,}000}[/math]

Five hundred nested universes-of-universes-of-universes. That is Hoyle’s number: the odds against, calculated by a self-declared atheist who did the mathematics honestly and refused to flinch from the consequence.
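Hoyle's figure and the nesting picture reduce to three integer operations. A minimal sketch using Hoyle and Wickramasinghe's per-enzyme figure of [math]10^{-20}[/math] and the [math]10^{140}[/math] trial budget from the previous section; the variable names are mine:

```python
LOG10_P_PER_ENZYME = -20  # Hoyle and Wickramasinghe's per-enzyme figure
N_ENZYMES = 2_000         # enzymes in their minimal cell
LOG10_ATOMS = 80          # one nesting level = one universe's worth of atoms
LOG10_TRIALS = 140        # trial budget used earlier in the text

log10_p_hoyle = LOG10_P_PER_ENZYME * N_ENZYMES   # exponent of Hoyle's probability
nesting_levels = -log10_p_hoyle // LOG10_ATOMS   # factors of 10^80 needed
shortfall = -log10_p_hoyle - LOG10_TRIALS        # orders of magnitude short

print(log10_p_hoyle, nesting_levels, shortfall)  # -40000 500 39860
```

The last value is the precise form of "roughly forty thousand orders of magnitude": the 140 orders the universe can supply barely dent the 40,000.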

My thesis, which requires no further discussion

Here I formulate what I consider my most important contribution to this debate: a thesis that needs no further discussion, because it is not a matter of opinion but a matter of definition.

Probability, as a mathematical concept, is only a meaningful category when the event space is commensurable with the trials available. When an event requires [math]10^{200{,}200}[/math] trials and the universe can supply [math]10^{140}[/math] trials, then the statement “the event is very improbable” is a verbal trivialization. The correct statement is: the event cannot occur through the mechanisms the universe makes available.

That is not a probability statement. That is a possibility statement. It is the difference between “rare” and “physically excluded.”
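The commensurability point can be stated with a standard inequality. Whatever the dependence between attempts, the chance of at least one success is at most the number of attempts times the per-attempt probability (Boole's inequality). A minimal sketch using Python's decimal module, which, unlike floating point, can represent exponents this small; it inherits the text's figures and assumes each attempt succeeds with the same probability:

```python
from decimal import Decimal

def at_least_one_success_bound(p: Decimal, trials: Decimal) -> Decimal:
    """Boole's inequality: P(at least one success) <= trials * p, capped at 1.
    Valid whether or not the attempts are independent, provided each attempt
    succeeds with probability p."""
    return min(Decimal(1), trials * p)

# Floats cannot represent anything below about 5e-324; Decimal's default
# context allows exponents down to about -10^6, so these values are exact.
P_LUCA = Decimal("1e-200200")  # per-attempt probability used in the text
TRIALS = Decimal("1e140")      # the text's generous trial budget

bound = at_least_one_success_bound(P_LUCA, TRIALS)
print(bound)  # 1E-200060
```

The bound is exactly the shortfall factor from the trial-budget calculation, expressed as a ceiling on the probability of even one success.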

Here lies my contribution, my milestone, if history should treat it as such, which is for history to decide. My contribution is the categorical claim that biological information cannot arise through random processes within this universe. Not “improbable.” Not “extremely rare.” But structurally excluded by the finiteness of the universe in conjunction with the combinatorial size of the biological search space.

This statement stands on its own. It is not refutable by proposing new mechanisms of natural selection, because natural selection can only act after the establishment of the self-replicating system. It is not refutable by appeals to “deep time,” because deep time is finite and the time required here lies far beyond any conception of finite time. It is not refutable by metaphysical appeals, because the statement is purely mathematical.

Anyone wishing to disagree must defend one of the following positions: that the observable universe contains more than [math]10^{80}[/math] atoms, that the Planck time is shorter than commonly estimated, that the universe is substantially older than 14 billion years, or that the complexity estimates for LUCA and the human genome are too high by many thousands of orders of magnitude. None of these positions is compatible with the data currently available.

Therefore: anyone who maintains the chance hypothesis is maintaining no hypothesis, but a stipulation. And stipulations, in science, are not a substitute for arguments.

My case is closed.

Where I Stand

Let me now state my position as clearly as I can. The probability that the human genome arose by random chemical processes, with or without the subsequent operation of natural selection, is mathematically indistinguishable from zero. The probability that the foundational genetic system of any life form, even the simplest, arose by random chemical processes is mathematically indistinguishable from zero. The numbers are not close. They are not even in the same arithmetic universe. The chance hypothesis fails, not by a hair, but by a margin so large that it is not a hypothesis at all.

This conclusion does not require religious commitment. It does not require any specific alternative theory. It does not require accepting any creation narrative from any tradition. It requires only that one follow the mathematics where the mathematics goes, and the mathematics goes far past the point at which any honest inquirer must concede that something other than chance is responsible for the information observed in living systems.

What that something is remains an open question, and it should remain an open question, because the data does not yet allow us to decide between several possible answers. It might be that the universe itself has informational structure built into its laws in a way that we have not yet understood, and that this informational structure tends to produce life under appropriate conditions. It might be that life originated elsewhere in the universe and was transferred here, and that the elsewhere had conditions or mechanisms more favorable to the origination of biological information than Earth has. It might be that life was deliberately initiated on Earth by an intelligence whose origin we cannot currently access. Or it might be something we have not yet conceived of, something that future generations will name and we will not recognize.

What is no longer open is whether life arose by random chemistry on a primordial Earth. That hypothesis is mathematically dead. It has been dead for fifty years. The reason it is still treated as the default in textbooks is not scientific. It is institutional. It is the same kind of inertia that kept geocentrism in place for generations after Copernicus, and that kept the steady-state universe in textbooks for years after the cosmic microwave background made it untenable. Eventually the inertia gives way. We are in the late stages of that process now, and articles like this one are part of how the giving way happens.

The Closing

I said at the beginning that I would set out my position so no one had to wonder where I was going. I have now done that. My position is that the human genome did not arise by chance, that no genome arose by chance, that the chance hypothesis is mathematically excluded by the actual numbers, and that the search for the actual mechanism by which biological information came into being is the most important open question in the natural sciences.

I am writing this not to convince anyone, because the people who are committed to the chance hypothesis will not be convinced by anything, and the people who are not committed to it do not need to be convinced. I am writing this for the third group, the people who have been told that the chance hypothesis is the consensus and have not had the time or training to look into the actual mathematics. Once you look into the actual mathematics, the chance hypothesis collapses. It is not a small collapse. It is a collapse of some forty thousand orders of magnitude on Hoyle’s calculation, and considerably worse on the more recent estimates that take the full complexity of the genome into account.

I have no patience for the response that says these calculations are wrong because evolution actually works differently. I am not arguing against evolution. Evolution operates on the genome that already exists. My argument is about the origin of the genome itself, before any selection had a substrate to act upon. On that question, evolutionary theory is silent, and the silence is not a small detail. It is the heart of the matter.

I have been thinking about this for a very long time. I have been afraid to write it for almost as long, because I knew the response it would draw. The response will come. It will be loud, and it will be confident, and it will mostly miss the point. That is fine. The numbers are the numbers. The probability is zero. The genome did not arise by chance. Where it came from, we do not yet fully know. That is the question. And it is, in my honest assessment, the most important question that humans can ask about themselves.

I rest my case here. The numbers speak for themselves. Anyone who wishes to disagree is welcome to do the arithmetic. I am confident in the result.

This article is one person’s argument, set out as carefully as I can make it. It is not a peer-reviewed paper. It is a synthesis of decades of reading, calculation, and reflection. The conclusion is one I would have preferred not to reach, because it commits me to positions that are unpopular and that will draw criticism from people whose respect I value. I reached it anyway, because the mathematics left me no choice. If you disagree, do the math yourself. I am happy to be shown wrong, but I am not happy to be shouted down, and the difference matters.

References

Axe, D. D. (2004). Estimating the prevalence of protein sequences adopting functional enzyme folds. Journal of Molecular Biology, 341, 1295-1315.

Crick, F. H. C., and Orgel, L. E. (1973). Directed Panspermia. Icarus, 19, 341-346.

Dekker, J., et al. (2017). The 4D nucleome project. Nature, 549, 219-226.

Eden, M. (1967). Inadequacies of neo-Darwinian evolution as a scientific theory. In P. S. Moorhead and M. M. Kaplan (Eds.), Mathematical Challenges to the Neo-Darwinian Interpretation of Evolution. Wistar Institute Press.

Einstein, A. (1936). Physics and Reality. Journal of the Franklin Institute, 221, 349-382.

Hoyle, F., and Wickramasinghe, N. C. (1982). Evolution from Space: A Theory of Cosmic Creationism. Simon and Schuster.

International Human Genome Sequencing Consortium (2001). Initial sequencing and analysis of the human genome. Nature, 409, 860-921.

Keefe, A. D., and Szostak, J. W. (2001). Functional proteins from a random-sequence library. Nature, 410, 715-718.

Koonin, E. V., and Novozhilov, A. S. (2009). Origin and evolution of the genetic code: The universal enigma. IUBMB Life, 61, 99-111.

Levinthal, C. (1969). How to fold graciously. In Mossbauer Spectroscopy in Biological Systems. University of Illinois Press.

Luco, R. F., et al. (2010). Regulation of alternative splicing by histone modifications. Science, 327, 996-1000.

McLeish, T. C. B., Cann, M. J., and Rodgers, T. L. (2012). The Levinthal paradox of the interactome. HFSP Journal, 6, 1-3.

Moody, E. R. R., et al. (2024). The nature of the last universal common ancestor and its impact on the early Earth system. Nature Ecology and Evolution, 8, 1654-1666.

Morowitz, H. J. (1968). Energy Flow in Biology. Academic Press.

Ponomarenko, E. A., et al. (2016). The Size of the Human Proteome: The Width and Depth. International Journal of Analytical Chemistry, 7436849.

Wang, E. T., et al. (2008). Alternative isoform regulation in human tissue transcriptomes. Nature, 456, 470-476.

Wigner, E. (1960). The Unreasonable Effectiveness of Mathematics in the Natural Sciences. Communications on Pure and Applied Mathematics, 13, 1-14.